Content Moderation in Facebook

Content moderation is the process of screening content that users post online against a set of pre-set rules or guidelines to determine whether it is appropriate [2]. Facebook is a social media platform that allows people to connect with friends, family and communities of people who share common interests [3]. Like many other popular social media platforms, Facebook has developed an approach to moderating and controlling the type of content users see and engage with. That approach relies on two kinds of moderators: AI moderators and human moderators. Both apply Facebook’s community standards, which lay out what the company believes each post should follow [4]. There have been many instances where Facebook’s content moderation tactics have succeeded, but also many where they have failed. The ethics of Facebook’s content moderation approach have also been widely controversial, from the mental health struggles human moderators are forced to deal with to questions about how the AI is trained to flag inappropriate content.

Overview/Background

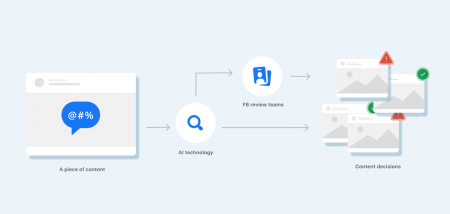

When it comes to content moderation, Facebook uses both AI moderators and human moderators. Posts that violate the community standards are deemed inappropriate; this includes everything from spam to hate speech to content that involves violence [4]. Inappropriate content is first identified by the AI moderators, while the human moderators handle posts that the AI is unsure about and are vital to improving the machine learning model the AI technology uses [5].

AI Moderators

Facebook initially filters all posts through its AI technology. This technology is built on machine learning (ML) models that can analyze the content of a post or recognize different items in a photo. These models determine whether a post fits within the community standards or whether action, such as removing the post, is needed [4].

Sometimes the AI technology is unsure whether content violates the community standards, so it sends the content to the human review teams. Once the review teams make a decision, the AI technology learns from that decision. This is how the technology is trained and improves over time [4].
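The routing and feedback loop described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not Facebook’s actual system: the `Router` class, the two threshold values, and the idea of a single violation score between 0 and 1 are all hypothetical, since the cited sources do not say how confident the model must be before it acts on its own.

```python
from dataclasses import dataclass, field

# Hypothetical thresholds (assumptions for illustration only).
REMOVE_THRESHOLD = 0.95  # at or above this score the model acts on its own
ALLOW_THRESHOLD = 0.05   # at or below this score the post is left alone


@dataclass
class Router:
    """Routes posts by violation score and stores human decisions as labels."""

    labeled_examples: list = field(default_factory=list)

    def route(self, violation_score: float) -> str:
        """Return 'remove', 'allow', or 'human_review' for a score in [0, 1]."""
        if violation_score >= REMOVE_THRESHOLD:
            return "remove"
        if violation_score <= ALLOW_THRESHOLD:
            return "allow"
        # The model is unsure, so the post goes to a human review team.
        return "human_review"

    def record_human_decision(self, post_text: str, violates: bool) -> None:
        """Keep the reviewer's decision so it can serve as training data later."""
        self.labeled_examples.append((post_text, violates))


router = Router()
print(router.route(0.99))  # remove
print(router.route(0.50))  # human_review
print(router.route(0.01))  # allow
router.record_human_decision("borderline post", True)
```

In this sketch the stored `labeled_examples` stand in for the step where reviewer decisions feed back into model training; how that retraining actually happens is not described in the sources.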

Facebook’s community standards often change to keep up with changes in social norms, language, and Facebook’s own products and services. This requires the content review process to evolve continually [4].

In the past, posts were reviewed in the order they were reported. However, Facebook says it wants to make sure the most important posts are seen first, so it changed its machine learning algorithms to prioritize more severe or harmful posts [5]. Facebook has also recently reworked how it decides whether a post is a violation. It used to have separate classification systems that looked at individual parts of a post, split by content type and violation type, with many classifiers examining photos and text. Facebook decided this was too disconnected and created a new approach. Now, Facebook says, its machine learning algorithm takes a holistic approach called Whole Post Integrity Embeddings (WPIE). It was trained on a wide range of violations and, according to Facebook, has greatly improved detection [6].
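The shift from chronological review to severity-first review can be illustrated with a priority queue. The sketch below is a simplified assumption of how such ordering might work: the `ReviewQueue` class, the violation names, and the severity weights are hypothetical and are not taken from Facebook’s published material.

```python
import heapq
from itertools import count

# Hypothetical severity weights; a higher number means a more harmful post.
SEVERITY = {"spam": 1, "hate_speech": 5, "violence": 8}


class ReviewQueue:
    """Review queue that serves the most severe reports first."""

    def __init__(self) -> None:
        self._heap = []
        self._order = count()  # tie-breaker: earlier reports come first

    def report(self, post_id: str, violation_type: str) -> None:
        severity = SEVERITY.get(violation_type, 0)
        # heapq is a min-heap, so the severity is negated to pop the largest first.
        heapq.heappush(self._heap, (-severity, next(self._order), post_id))

    def next_for_review(self) -> str:
        _, _, post_id = heapq.heappop(self._heap)
        return post_id


queue = ReviewQueue()
queue.report("post-1", "spam")         # reported first, least severe
queue.report("post-2", "violence")     # reported second, most severe
queue.report("post-3", "hate_speech")
first = queue.next_for_review()
print(first)  # post-2
```

A purely chronological queue would have returned `post-1` first; here the report order only matters when two posts have the same severity.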

Human Moderators

Although Facebook initially filters all posts through its AI technology, content that the AI decides requires further review is passed to human review teams, who take a closer look and decide whether or not to remove the post. In other words, the human moderators get the final say [4].

About 15,000 Facebook content moderators are employed throughout the world. Their main job is to sort through the AI-flagged posts and decide whether or not they violate the company’s guidelines [5]. Facebook has worked with many companies to help moderate content, including Cognizant, Accenture, Arvato, and Genpact [1].

Because Facebook is one of the largest social media sites, its human moderators are often overwhelmed. Many human moderators have also reported struggling with mental health issues as a result of the job. See Ethical Concerns for more detail.

Individual Moderation

Individual users on Facebook are able to moderate or control the type of posts they see. These posts may not violate the community standards Facebook has created, but for whatever reason the user does not want to see them. Users can block certain words from being seen or block other users entirely. While this option could be dangerous and create ______, allowing users to moderate the content they see is important to Facebook [7].
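As a rough illustration of this kind of user-level filtering, the following sketch hides posts that come from a blocked user or contain a blocked word. The `UserFilter` class and the sample feed are hypothetical and only show the general idea; they do not reflect how Facebook implements the feature.

```python
import re
from dataclasses import dataclass, field


@dataclass
class UserFilter:
    """Per-user preferences: words and authors the user does not want to see."""

    blocked_words: set = field(default_factory=set)
    blocked_users: set = field(default_factory=set)

    def is_visible(self, author: str, text: str) -> bool:
        """Return False if the author is blocked or the text has a blocked word."""
        if author in self.blocked_users:
            return False
        # Compare whole words, ignoring case.
        words = set(re.findall(r"[a-z0-9']+", text.lower()))
        return not (words & {w.lower() for w in self.blocked_words})


prefs = UserFilter(blocked_words={"spoiler"}, blocked_users={"user_42"})
feed = [
    ("user_1", "Great game last night"),
    ("user_42", "Hello"),
    ("user_7", "Huge SPOILER ahead"),
]
visible = [(author, text) for author, text in feed if prefs.is_visible(author, text)]
print(visible)  # [('user_1', 'Great game last night')]
```

Note that none of the hidden posts needs to violate the community standards; the filter reflects only the individual user’s preferences.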

Instances

Covid

Backlash around Free Speech

Lawsuits

Language Gaps

Donald Trump

Ethical Concerns

Mental Health

Moderation rules/classifications

LGBTQ community

Conclusion

References

- [1] Wong, Q. (2019, June). Facebook content moderation is an ugly business. Here's who does it. CNET. Retrieved from https://www.cnet.com/tech/mobile/facebook-content-moderation-is-an-ugly-business-heres-who-does-it/

- [2] Roberts, S. T. (2017). Content moderation. In Encyclopedia of Big Data. UCLA. Retrieved from https://escholarship.org/uc/item/7371c1hf

- [3] Facebook. Meta. Retrieved from https://about.meta.com/technologies/facebook-app/

- [4] How does Facebook use artificial intelligence to moderate content? Facebook Help Center. Retrieved from https://www.facebook.com/help/1584908458516247

- [5] Vincent, J. (2020, November 13). Facebook is now using AI to sort content for quicker moderation. The Verge. Retrieved from https://www.theverge.com/2020/11/13/21562596/facebook-ai-moderation

- [6] New progress in using AI to detect harmful content. Meta AI. Retrieved from https://ai.facebook.com/blog/community-standards-report/

- [7] Meta Business Help Center. Moderation. Meta. Retrieved from https://www.facebook.com/business/help/1323914937703529