Computer Vision

Computer vision is an interdisciplinary scientific field that looks to enable computers to gain high-level understanding from digital images and videos. The field seeks to understand and automate tasks like those accomplished every day by the human eye. [5]

Computer vision has applications in medicine, machine vision, the military, autonomous vehicles, tactile feedback, and more. Typical computer vision tasks include recognition, identification, detection, scene reconstruction, and image restoration. This article covers the history, applications, and ethical implications of computer vision.

Definition

Computer vision is a field of programming that aims to have computers process and understand the contents of an image as a human brain does. [5] It takes numerical data, in the form of the pixels of an image, and analyzes the patterns in that data so that higher-level information can be extracted and understood. It can be summarized as the quantification and automation of the human visual system. [1] Computer vision primarily relies on probabilistic and physics-based models to decipher its inputs, and is still very error-prone. [2]
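To make the idea of pixels as numerical data concrete, the following is a minimal sketch in Python. The pixel values, the function names, and the choice of a simple brightness difference are all assumptions made for illustration; real systems use far more robust methods. It treats a small grayscale image as a grid of numbers and extracts one piece of higher-level information from it: where a dark region meets a bright one.

```python
# A tiny 4x6 grayscale "image": each number is one pixel's brightness (0-255).
# The values are made up for illustration: a dark region next to a bright one.
image = [
    [10, 12, 11, 200, 205, 198],
    [9, 11, 13, 201, 199, 202],
    [12, 10, 12, 197, 203, 200],
    [11, 13, 10, 202, 201, 199],
]


def horizontal_gradient(img):
    """Absolute brightness difference between each pixel and its right neighbor."""
    return [[abs(row[x + 1] - row[x]) for x in range(len(row) - 1)] for row in img]


def strongest_edge_column(img):
    """Index of the column whose summed difference to the next column is largest."""
    grad = horizontal_gradient(img)
    column_sums = [sum(row[x] for row in grad) for x in range(len(grad[0]))]
    return column_sums.index(max(column_sums))


edge = strongest_edge_column(image)
print(f"Strongest vertical edge lies between columns {edge} and {edge + 1}")
```

The raw input is nothing but numbers; the statement "there is a vertical edge here" is the kind of higher-level information the definition refers to.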

History

Pre-2000s

Computer vision was first theorized by Lawrence Roberts in his 1963 Ph.D. dissertation at MIT. [40] The dissertation outlined how to extract information about known three-dimensional objects from a perspective projection. [3] The field quickly became popular within the scientific community because it showed signs of promising new technology, and researchers were able to raise millions of dollars to pursue further experimentation. [54] In 1966, Marvin Minsky, a co-founder of the MIT AI Lab, got approval to hire a student, Gerald Sussman. Sussman was assigned to research how to connect a camera to a computer so that the computer could describe the images it saw. By the end of the summer, Sussman realized that the challenge was far more complicated than he had originally thought. [52] By the mid-1970s, researchers and sponsors began to lose faith in computer vision and to pull funding. This began a period known as the AI winter, during which advancements in artificial intelligence slowed significantly. [50] The problem was revisited by David Marr in 1978, who developed techniques to segment images and detect edges within them. [4] After Marr's discovery, image recognition was approached primarily on the basis of perspective understanding as opposed to holistic geometric extrapolation. [5]

ImageNet Large Scale Visual Recognition Challenge

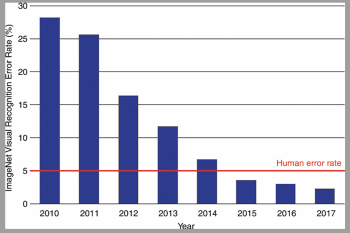

Before the 2000s, and even a decade into the new century, computer vision made steady but unremarkable progress. It was not until the ImageNet Large Scale Visual Recognition Challenge (ILSVRC) that computer vision began to see large improvements. [54] The ILSVRC is a competition in which teams of researchers compete in an image recognition contest and are judged on the accuracy of their models. [53] In the early stages of the competition, around 2010, the winning entry had an error rate of around 28%. [55] Then, in 2012, a research team from the University of Toronto created a model called AlexNet that cut the error rate to 16%. [55] The team achieved this by using a then-new technique in artificial intelligence called deep learning, which would become the new standard for computer vision. [51] After further research in deep learning, the winning error rate in the ILSVRC fell to 2.3%. To put this in perspective, this is well below the human error rate for image recognition. These gains underpin modern technologies such as autonomous vehicle navigation and medical imaging.
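The text above does not say how the error rate is measured. ILSVRC classification results are commonly reported as a top-5 error: a prediction counts as correct if the true label is among the model's five most confident guesses. The following Python sketch shows that calculation under this assumption; the function name and the sample data are hypothetical.

```python
def top_k_error(ranked_predictions, true_labels, k=5):
    """Fraction of images whose true label is not among the first k guesses."""
    if len(ranked_predictions) != len(true_labels):
        raise ValueError("need one ranked prediction list per label")
    misses = sum(
        1
        for guesses, truth in zip(ranked_predictions, true_labels)
        if truth not in guesses[:k]
    )
    return misses / len(true_labels)


# Hypothetical model output: guesses are ordered from most to least confident.
predictions = [
    ["cat", "dog", "fox"],
    ["car", "bus", "van"],
    ["tree", "bush", "fern"],
    ["cup", "bowl", "mug"],
]
labels = ["cat", "van", "fern", "plate"]

print(top_k_error(predictions, labels, k=1))  # only the top guess counts
print(top_k_error(predictions, labels, k=3))  # any of the top three counts
```

Allowing more guesses per image can only lower the error, which is why the value of k must be stated whenever error rates are compared.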

Applications

Autonomous Vehicles

Autonomous vehicles are vehicles that are self-guided to some degree. They can range from simply enhancing driver visual stimuli to fully planning and executing a navigation path. [6] Autonomous vehicles can take the form of cars, small delivery robots, submersibles, aircraft, rovers, rockets, and missiles, among others. [7] [8] [9] [10]

Autonomous vehicles have many applications as well. Unmanned aerial vehicles have been used for depositing payloads in urban spaces as well as for detecting wildfires. [11] [12] Autonomous submarines have long been used to map the ocean and collect valuable scientific data, and new uses continue to be discovered. [1] [13] Companies like Waymo are at the forefront of developing consumer autonomous driving technology. [41]

Autonomous Vehicles - Levels of Autonomy [42]

Because autonomous cars are the most consumer-facing example, legislators and standards committees have subjected corporate players to intense scrutiny and classification. SAE International has defined six levels of autonomy for vehicles. Level 0 is defined as “No Automation,” or a standard vehicle. Level 1 is defined as simple steering and acceleration assistance, while the majority of control is still up to the driver. Level 2 adds assisted deceleration to the list of autonomous features, but the majority of control is still up to the driver. Levels 0-2 are classified as primarily human-controlled, whereas levels 3-5 are classified as primarily automated driving.

Level 3 is the first level where the vehicle can operate without driver assistance, but its defining characteristic is that it requires the intervention of the driver when requested. Level 4 can perform entirely autonomously, such that the driver is never asked to intervene except in the case of unknown roadway conditions. Level 5 automation builds upon level 4 in that it can operate entirely autonomously in any given conditions. Consumer autonomous vehicles currently range from levels 0-3 autonomy, though levels 4 and 5 are highly sought after and rapidly being developed.
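The classification above can be written down as a small data structure. The following Python sketch encodes only what this section states; the member names and the helper function are illustrative assumptions, not SAE terminology.

```python
from enum import IntEnum


class AutonomyLevel(IntEnum):
    """Levels 0-5 as summarized in this section; member names are illustrative."""

    LEVEL_0 = 0  # no automation, a standard vehicle
    LEVEL_1 = 1  # simple steering and acceleration assistance
    LEVEL_2 = 2  # adds assisted deceleration
    LEVEL_3 = 3  # drives itself, but the driver must intervene when requested
    LEVEL_4 = 4  # drives itself except in unknown roadway conditions
    LEVEL_5 = 5  # drives itself in any conditions


def is_primarily_automated(level: AutonomyLevel) -> bool:
    """Levels 0-2 are primarily human-controlled; levels 3-5 are primarily automated."""
    return level >= AutonomyLevel.LEVEL_3


for level in AutonomyLevel:
    kind = "automated" if is_primarily_automated(level) else "human-controlled"
    print(f"Level {level.value}: primarily {kind}")
```

Using an ordered enumeration keeps the dividing line between levels 2 and 3 in one place rather than scattering numeric comparisons through the code.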

Total Miles Driven by Autonomous Vehicles [43]

| Company | Total Cars | Miles Driven in 2019 | Disengagement Rate |
|---|---|---|---|
| Waymo | 153 | 1.45 million | 1 per 11,017 miles |
| GM Cruise | 233 | 831,040 | 1 per 7,635 miles |
| Apple | 66 | 7,544 | 1 per 1.1 miles |
| Lyft | 20 | 42,930 | 1 per 26 miles |
| Aurora | No Data | 39,729 | 1 per 94 miles |
| Nuro | 33 | 68,762 | 1 per 2,022 miles |
| Pony.ai | 22 | 174,845 | 1 per 6,476 miles |
| Baidu | 4 | 108,300 | 1 per 18,182 miles |
| Zoox | 58 | 67,015 | 1 per 1,596 miles |

Disengagements are circumstances in which the human driver must take control of the vehicle due to a failure in technology or when necessitated for the safe operation of the vehicle.
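As a rough illustration of how the table can be read, the Python sketch below ranks the companies by miles per disengagement and estimates how many disengagements each rate implies. The figures are copied from the table, with Waymo's "1.45 million" taken as 1,450,000. Because the published rates are rounded, the computed counts are approximations and not reported values.

```python
# (company, miles driven in 2019, miles per disengagement), copied from the table.
# Waymo's mileage is the rounded "1.45 million" figure.
reports = [
    ("Waymo", 1_450_000, 11_017),
    ("GM Cruise", 831_040, 7_635),
    ("Apple", 7_544, 1.1),
    ("Lyft", 42_930, 26),
    ("Aurora", 39_729, 94),
    ("Nuro", 68_762, 2_022),
    ("Pony.ai", 174_845, 6_476),
    ("Baidu", 108_300, 18_182),
    ("Zoox", 67_015, 1_596),
]


def estimated_disengagements(miles, miles_per_disengagement):
    """Approximate number of disengagements implied by the rounded rate."""
    return round(miles / miles_per_disengagement)


# More miles between disengagements means the driver had to take over less often.
ranked = sorted(reports, key=lambda report: report[2], reverse=True)
for company, miles, rate in ranked:
    count = estimated_disengagements(miles, rate)
    print(f"{company:<10} {rate:>8} miles per disengagement (about {count} in total)")
```

Ranking by rate rather than by total miles avoids rewarding a company simply for running a larger fleet.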

Surveillance and Facial Recognition

Computer vision has seen a marked rise in applications involving surveillance systems, as automated recognition of targets accelerates security and threat prevention measures in both private and public environments. In the United States, Federal Bureau of Investigation (FBI) and police department databases hold facial recognition data on more than 117 million citizens. [15] The majority of these data points are retrieved from driver’s licenses and mugshot records. The FBI’s Next Generation Identification facial recognition program is an extension of the Integrated Automated Fingerprint Identification System and has an accuracy of about 86%. [16] It has been used for the automated recognition of criminals by cross-referencing images with the aforementioned database.

Facial recognition and surveillance are prevalent throughout China as well. First launched in 2005, China’s Skynet system is the largest surveillance system in the world, with more than 400 million CCTV cameras installed across the country that keep tabs on nearly every citizen. [17] [18] The system is used for autonomously identifying criminals, tracking citizens’ locations, and controlling civil unrest through the apprehension of dissidents. [19] Surveillance techniques have since expanded to include drones, which double as law enforcement aids. [20]

Biometric Recognition

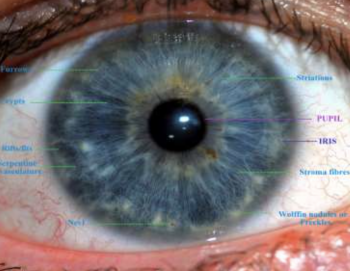

Biometric markers are commonly seen in conjunction with computer vision technology. [44] Iris recognition, or iris scanning, uses visible and near-infrared light to take a high-contrast photo of a person's iris. The scan measures the iris pattern in the colored part of the eye, although the color itself is not part of the scan. A bit pattern can then be created and compared to stored templates for verification. [45] The unique features of the iris make this a secure form of biometric recognition and authentication. The iris is a relatively constant biometric over time because its unique features remain intact even through medical and surgical procedures. Due to the size of the iris, the subject must be no farther than a few meters from the camera. Additionally, an iris scan is limited by lighting differences, image quality, defocus, and occlusion by eyelids and eyelashes. [46]
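The comparison of a bit pattern against a stored template can be sketched as follows. The text does not specify how the comparison is performed; this Python example assumes a normalized Hamming distance, a common choice for comparing binary codes, and the 16-bit patterns and the 0.25 threshold are made-up values for illustration only.

```python
def hamming_fraction(code_a: str, code_b: str) -> float:
    """Fraction of bit positions at which two equal-length bit strings differ."""
    if len(code_a) != len(code_b):
        raise ValueError("bit patterns must have the same length")
    differing = sum(a != b for a, b in zip(code_a, code_b))
    return differing / len(code_a)


def matches(scan: str, template: str, threshold: float = 0.25) -> bool:
    """Accept the scan if it differs from the template in few enough bits.

    The threshold is a hypothetical value chosen for this sketch.
    """
    return hamming_fraction(scan, template) <= threshold


stored_template = "1011001110001011"  # made-up 16-bit pattern
same_eye_scan = "1011001010001111"  # same pattern with two bits flipped by noise
other_eye_scan = "0100110110110100"  # unrelated pattern

print(matches(same_eye_scan, stored_template))
print(matches(other_eye_scan, stored_template))
```

A tolerance is needed because factors such as lighting, defocus, and eyelid occlusion mean that two scans of the same iris rarely produce identical bits.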

Another form of biometric recognition is the retinal scan. The vascular configuration of the retina creates a unique signature. [47] As with the iris scan, a light is shone into the eye to scan the blood vessel pattern. [44] Since the blood vessels are protected inside the eye and are difficult to change or replicate, retinal scans are one of the more secure options for recognition technology. [2] [3] [47] Due to the nature of the scan, it is considered less desirable and is not as commonly used. [44]

An emerging biometric technology is gait recognition. [4] [48] This technology can differentiate people by the way they walk. Gait metrics are acquired in a similar way to facial recognition data. [47] Gait has advantages over metrics like iris and retinal scans because it is less invasive and the subject can be farther away. [48] Gait is not a permanent metric, however: it is a behavioral biometric and can change over time due to body weight or brain damage. [47] In a study done to improve the gait algorithm, researchers achieved recognition rates of 61% and 96% on two separate data sets. The researchers suggested that the difference between the two rates was due to the distance of the subject from the camera. [49]

Consumer Applications

Facial recognition has recently been implemented in everyday consumer products. Notable examples are Apple’s Face ID and Snapchat’s lenses feature. These features rely on a three-dimensional mask overlaid on a subject's face, constructed by recognizing facial features to align the mask in key areas. Apple’s technology utilizes infrared projection to enable use in the dark. [14]

Computer vision has been adopted in the organization and classification of photos and videos. One product that has adopted computer vision algorithms for consumer use is Google Photos. [31] Google Photos allows users to view their photos by any number of categorizations, including objects in the photo, events, pets, and even specific people's faces. [31] [32]

Another consumer application of computer vision is image restoration. Image restoration is the act of taking a corrupt or noisy image and estimating the clean, originally intended image. Corruption may come in many forms, including noise, lack of camera focus, and motion blur, and image restoration attempts to remove these defects. This technology has significantly improved the quality of amateur photography. [37] [38] While the process can be done manually with products like Adobe Photoshop, other products (such as Movavi) offer a degree of automation afforded by computer vision technology. [39]
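As a minimal illustration of estimating a clean image from a noisy one, the Python sketch below applies a 3x3 median filter, a classic technique for removing isolated noisy pixels. It is an assumption chosen for simplicity and is not the method used by the products named above; the pixel values are made up.

```python
from statistics import median


def median_filter(img):
    """Replace each pixel with the median of its 3x3 neighborhood.

    Neighbors that would fall outside the image are skipped, so border
    pixels use a smaller window. Results are truncated to integers.
    """
    height, width = len(img), len(img[0])
    restored = []
    for y in range(height):
        row = []
        for x in range(width):
            window = [
                img[ny][nx]
                for ny in range(max(0, y - 1), min(height, y + 2))
                for nx in range(max(0, x - 1), min(width, x + 2))
            ]
            row.append(int(median(window)))
        restored.append(row)
    return restored


# A flat gray image with two corrupted pixels (one too bright, one too dark).
noisy = [
    [50, 50, 50, 50],
    [50, 255, 50, 50],
    [50, 50, 0, 50],
    [50, 50, 50, 50],
]

restored = median_filter(noisy)
for row in restored:
    print(row)
```

The median is used instead of the mean because a single extreme value does not pull the result toward itself, so isolated noisy pixels are removed without smearing their values into their neighbors.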

Ethics

Autonomous Vehicles

As autonomous navigation systems approach the consumer market, a number of ethical dilemmas are introduced. Like human drivers, autonomous vehicles have to be prepared for emergency situations and act accordingly. One such dilemma, known as the Trolley Problem, is a situation where the vehicle has no other option than to cause an accident. [21] The dilemma posits the decision to either kill a bystander or swerve into a wall and kill the passenger. While contrived and unlikely to occur by conventional means, this dilemma demonstrates the difficulty of translating human decision-making skills into code.

Variations of the trolley problem add further complexity to the computation behind autonomous navigation. The Massachusetts Institute of Technology’s Moral Machine experiment explores this situation using data collected from around the world on societal preferences about whom to spare. The study concludes that society would foremost prefer to spare younger pedestrians when faced with the previous situation. Other results include sparing pedestrians over passengers, sparing more characters over fewer, sparing humans over pets, and sparing higher-status individuals over lower-status individuals. [22]

Surveillance and Facial Recognition

Facial recognition in surveillance has attracted much debate. On one hand, facial recognition technology has led to the successful arrest of numerous criminals. [23] However, it is an imperfect technology and has led to wrongful imprisonment based on inaccurate identifications. [24] Additionally, facial recognition algorithms do not operate with the same efficacy for all races and genders. For white males, facial recognition algorithms can reach accuracies of up to 90%, while accuracy can drop by as much as 34% for Black females. [25]

Facial recognition has also been used in China to track Uighurs, an ethnic minority whose relations with the Chinese government are currently tense. [26] The technology has been used to determine the locations of Uighur citizens so that the Chinese government can relocate them to political re-education camps. [27] These camps have been labelled internment camps and practice forced sterilization. [28] The United States has denounced the practice, calling it ‘genocide’ and imposing sanctions in response. [29] This has sparked further debate about the use of facial recognition technology for extracting ethnic features. [30]

Consumer Applications

There are privacy concerns regarding the information that can be collected from consumer products. Companies like Facebook, Google, Adobe, and Apple all use computer vision in their various consumer products, which are used extensively in the US and globally. [33] [34] To alleviate these privacy and ethics concerns, companies refer users to their privacy statements and give assurances of data privacy or anonymity. For example, Google Photos now promises its users that "face groups and labels in your account are only visible to you." [35] Apple tells its users that Face ID "carefully safeguards the privacy and security of a user’s biometric data." [36] However, the general public does not often read the terms and conditions in their entirety. [6]

Potential for Privacy Violations

As technology involving computer vision improves, more ethical concerns will be raised. In 2016, researchers at the Massachusetts Institute of Technology, Northeastern University, and the Qatar Computing Research Institute developed computer-vision-based software called "Face-to-BMI" that infers a person's BMI from images of that person. [7] Such software could be abused in health insurance: insurance companies could use computer vision to assess the health of applicants from photos and increase premiums according to conditions found in the photos, such as BMI. [8] Computer vision also has security implications. As surveillance systems improve and become more widespread, potential security breaches become all the more dangerous: black hat hackers could view sensitive data, such as a government official typing in a password. [9] There are also risks of privacy violations for non-users of a service, as computer vision may not be able to differentiate between users and non-users. Sensitive information could be extracted from a non-user without their consent simply because they appear in a photo or video of a consenting user. [10]

Botting

Improvements in computer vision technology have weakened bot detection, so the tests used to distinguish authentic users from automated bots are becoming more and more difficult. Google used one of its machine learning algorithms to solve distorted-text CAPTCHAs, and the computer deciphered the text 99.8 percent of the time, while humans got it right only 33 percent of the time. [11] If this technology becomes widely available, distinguishing bots from authentic users may become too difficult for many companies, with quality-of-life and ethical ramifications. One example is scalper bots, which automatically secure high-demand goods such as concert tickets so that the bot owner can resell them at a markup.

References

1. Medioni, G., & Kang, S. B. (2005). Emerging topics in computer vision. Upper Saddle River: Prentice Hall. Retrieved from https://sites.rutgers.edu/peter-meer/wp-content/uploads/sites/69/2018/12/rotechcv.pdf

2. Szeliski, R. (2010). Computer vision: Algorithms and applications. Springer.

3. Roberts, L. G. (1980). Machine perception of three-dimensional solids. New York: Garland Pub.

4. Marr, D., & Nishihara, H. (1978). Representation and Recognition of the Spatial Organization of Three-Dimensional Shapes (Vol. 200). Proceedings of the Royal Society of London. Retrieved from http://www.cog.brown.edu/courses/cg195/pdf_files/CG195MaNi78.pdf

5. Huang, T. (1996-11-19). Vandoni, Carlo, E (ed.). Computer Vision: Evolution and Promise (PDF). 19th CERN School of Computing. Geneva: CERN. page 1. doi:10.5170/CERN-1996-008.21. ISBN 978-9290830955. Retrieved from http://cds.cern.ch/record/400313/files/p21.pdf

6. Wen, D., Yan, G., Zheng, N., & Shen, L. (2011). Toward cognitive vehicles. Retrieved from https://ieeexplore.ieee.org/abstract/document/5898448?casa_token=9fQnAkrg9dcAAAAA%3AVmBTXXOtHLmy0NHCCbR7zk0nyRqrAe6EFMXvEVUyRYyjU7gNM_BDxzxXxHPQCI50vvxikK2zdw

7. Frew, E., McGee, T., Kim, Z., Xiao, X., & Jackson, S. (2004, March 6). Vision-based road-following using a small autonomous aircraft. Retrieved from https://ieeexplore.ieee.org/abstract/document/1368106?casa_token=ydmCwxajiuQAAAAA%3AgdOfRAaYq95QsY9nPEc2JT8LI4c5QOeHifDLt0ZRy9jMFSZors5Bzb70J8lPVg1e0ql_DrpyDA

8. Frontiers of engineering: Reports on leading-edge engineering from the 2016 symposium. (2017). Washington, DC: The National Academies Press.

9. Singh, L. (2004). Autonomous missile avoidance using nonlinear model predictive control. AIAA Guidance, Navigation, and Control Conference and Exhibit. doi:10.2514/6.2004-4910

10. Jennings, D., & Figliozzi, M. (2019). Study of Sidewalk autonomous delivery robots and their potential impacts on Freight efficiency and travel. Transportation Research Record: Journal of the Transportation Research Board, 2673(6), 317-326. doi:10.1177/0361198119849398

11. Hadi, G. S., Varianto, R., Trilaksono, B. R., & Budiyono, A. (2015). Autonomous UAV system development for Payload DROPPING MISSION. The Journal of Instrumentation, Automation and Systems, 1(2), 72-77. doi:10.21535/jias.v1i2.158

12. Kumar, M., Cohen, K., & HomChaudhuri, B. (2011). Cooperative control of multiple Uninhabited aerial vehicles for monitoring and fighting wildfires. Journal of Aerospace Computing, Information, and Communication, 8(1), 1-16. doi:10.2514/1.48403

13. Blidberg, D. R. (2001). The development of autonomous underwater VEHICLES (auv); A Brief Summary. Retrieved from https://auvac.org/files/publications/icra_01paper.pdf

14. Mandal, J. K., & Bhattacharya, D. (2020). Emerging Technology in Modelling and Graphics: Proceedings of IEM Graph 2018. Singapore: Springer Singapore. Retrieved from https://link.springer.com/content/pdf/10.1007/978-981-13-7403-6.pdf

15. Garvie, C., Bedoya, A., & Frankle, J. (2016, October 18). The perpetual Line-Up. Retrieved from https://www.perpetuallineup.org/

16. Goodwin, G. (n.d.). FACE RECOGNITION TECHNOLOGY DOJ and FBI Have Taken Some Actions in Response to GAO Recommendations to Ensure Privacy and Accuracy, But Additional Work Remains (United States, United States Government Accountability Office).

17. Wang, W. (n.d.). Dark data. Retrieved from https://mfadt.parsons.edu/darkdata/surveillance-madness.html

18. Ng, A. (2020, August 11). China tightens control with facial Recognition, public shaming. Retrieved from https://www.cnet.com/news/in-china-facial-recognition-public-shaming-and-control-go-hand-in-hand/#:~:text=In%20China%2C%20no%20one%20is,jaywalking%20on%20a%20digital%20billboard.

19. Walton, G. (2001). China's golden shield: Corporations and the development of surveillance technology in the People's Republic of China. Montreal: Rights & Democracy, International Centre for Human Rights and Democratic Development.

20. Chen, L. (2019, September 06). Traffic police in China are using drones to give orders from above. Retrieved from https://www.scmp.com/news/china/society/article/3026051/traffic-police-china-are-using-drones-give-orders-above

21. Thomasson, A. L. (2008). General topic: Intentionality and phenomenal consciousness. Peru, IL: Hegeler Inst.

22. Awad, E., Dsouza, S., Kim, R., Schulz, J., Henrich, J., Shariff, A., . . . Rahwan, I. (2018, October 24). The moral machine experiment. Retrieved from https://www.nature.com/articles/s41586-018-0637-6.

23. Anthony, S. (2017, June 06). UK police arrest man via Automatic face-recognition tech. Retrieved from https://arstechnica.com/tech-policy/2017/06/police-automatic-face-recognition/

24. Hill, K. (2020, December 29). Another arrest, and jail time, due to a BAD facial recognition match. Retrieved from https://www.nytimes.com/2020/12/29/technology/facial-recognition-misidentify-jail.html#:~:text=In%202019%2C%20a%20national%20study,on%20bad%20facial%20recognition%20matches.

25. Najibi, A. (2020, October 26). Racial discrimination in face recognition technology. Retrieved from https://sitn.hms.harvard.edu/flash/2020/racial-discrimination-in-face-recognition-technology/

26. Fitzgerald, G. (2020). Chinese authoritarianism and the systematic suppression of the Uighur ethnic minority. Retrieved from https://scarab.bates.edu/asian_studies_theses/7/

27. Raza, Z. (2019). CHINA’S ‘POLITICAL RE-EDUCATION’ camps OF XINJIANG’S UYGHUR MUSLIMS. Asian Affairs, 50(4), 488-501. doi:10.1080/03068374.2019.1672433

28. Ramzy, A., & Buckley, C. (2019, November 16). 'Absolutely no mercy': Leaked files expose how china organized mass detentions of muslims. Retrieved from https://www.nytimes.com/interactive/2019/11/16/world/asia/china-xinjiang-documents.html

29. Wong, E., & Buckley, C. (2021, January 19). U.S. says China's repression of Uighurs Is 'Genocide'. Retrieved from https://www.nytimes.com/2021/01/19/us/politics/trump-china-xinjiang.html

30. X. -d. Duan, C. -r. Wang, X. -d. Liu, Z. -j. Li, J. Wu and H. -l. Zhang, "Ethnic Features extraction and recognition of human faces," 2010 2nd International Conference on Advanced Computer Control, Shenyang, China, 2010, pp. 125-130, doi: 10.1109/ICACC.2010.5487194.

31. Google. (2021). "Get started with Google Photos". Google Photos Help. Retrieved March 12, 2021.

32. Google. (2021). "Search by people, things & places in your photos". Google Photos Help. Retrieved March 12, 2021.

33. Luckerson, V. (2017, May 25). "Why Google Is Suddenly Obsessed With Your Photos". The Ringer. Retrieved March 12, 2021.

34. Toussaint, K. (2017, September 19). "Apple’s face recognition could pose privacy concerns, experts say". Metro US. Retrieved March 19, 2021.

35. Google. (2021). "Search by people, things & places in your photos". Google Photos Help. Retrieved March 12, 2021.

36. Apple. (2021, February 18). "Touch ID and Face ID security". Apple Support. Retrieved March 19, 2021.

37. Castleman, K. R. (1996). Digital Image Processing. Prentice Hall.

38. Jain, A. K. (1989). Fundamentals of Digital Image Processing. Prentice Hall.

39. Movavi. (2021). "Movavi Picverse". Movavi. Retrieved March 19, 2021.

40. Roberts, L. G. (1963). "Machine perception of three-dimensional solids" (dissertation). Massachusetts Institute of Technology, Cambridge, MA. Retrieved March 18, 2021.

41. Waymo. (2021). "Home | Waymo". Waymo. Retrieved March 19, 2021.

42. SAE International. (2020, May 15). SAE J3016 Automated-driving graphic. Retrieved from https://www.sae.org/news/2019/01/sae-updates-j3016-automated-driving-graphic

43. Wiggers, K. (2020, February 27). California DMV Releases autonomous vehicle disengagement reports for 2019. Retrieved from https://venturebeat.com/2020/02/26/california-dmv-releases-latest-batch-of-autonomous-vehicle-disengagement-reports/

44. Woodward, J., Horn, C., Gatune, J., Thomas, A., & Ca, R. (2003, January 01). Biometrics: A look at facial recognition. Retrieved March 31, 2021, from https://apps.dtic.mil/sti/citations/ADA414520

45. Sheard, N., Schwartz, A., Kelley, J., Hussain, S., & Mir, R. (2019, October 26). Iris recognition. Retrieved March 31, 2021, from https://www.eff.org/pages/iris-recognition

46. Sehrawat, J., & Sankhyan, D. (2016, September 05). Iris patterns as a Biometric tool for FORENSIC Identifications: A review. Retrieved April 01, 2021, from https://www.ipebj.com.br/bjfs/index.php/bjfs/article/view/620

47. Delac, K., & Grgic, M. (2004, November 15). A survey of biometric recognition methods. Retrieved March 31, 2021, from https://ieeexplore.ieee.org/abstract/document/1356372?casa_token=Yolp5tZ9Bp0AAAAA%3ANDTXkKnYDywbUFqiKVaIL8XZ__P-1xp_majOziHWLTG1wTRGCuyC7JwSgFGYkpJ1l39oon3Pjc8

48. Nixon, M., Carter, J., Nash, J., Huang, P., Cunado, D., & Stevenage, S. (1999, January 01). Automatic gait recognition. Retrieved March 31, 2021, from https://digital-library.theiet.org/content/conferences/10.1049/ic_19990573

49. Zhang, R., Vogler, C., & Metaxas, D. (2005). Human gait recognition. Retrieved March 31, 2021, from https://ieeexplore.ieee.org/abstract/document/1384807

50. John Markoff. (2014, Dec. 15). Innovators of Intelligence Look to Past. New York Times. Retrieved April 07, 2021, from https://www.nytimes.com/2014/12/16/science/paul-allen-adds-oomph-to-ai-pursuit.html

51. Alex Krizhevsky., Ilya Sutskever., Geoffrey E. Hinton. (2012). ImageNet Classification with Deep Convolutional Neural Networks. The University of Toronto. Retrieved April 07, 2021, from https://papers.nips.cc/paper/2012/file/c399862d3b9d6b76c8436e924a68c45b-Paper.pdf

52. Gerald Sussman. (2020). Gerald Sussman. Massachusetts Institute of Technology. Retrieved April 07, 2021, from https://www.csail.mit.edu/person/gerald-sussman

53. Olga Russakovsky, Jia Deng, Hao Su, Jonathan Krause, Sanjeev Satheesh, Sean Ma, Zhiheng Huang, Andrej Karpathy, Aditya Khosla, Michael Bernstein, Alexander C. Berg, Li Fei-Fei. (2015). ImageNet Large Scale Visual Recognition Challenge. Cornell University. Retrieved April 07, 2021, from https://arxiv.org/abs/1409.0575

54. Motion Metrics. (2021). How Artificial Intelligence Revolutionized Computer Vision: A Brief History. Motion Metrics. Retrieved April 07, 2021, from https://www.motionmetrics.com/how-artificial-intelligence-revolutionized-computer-vision-a-brief-history/#:~:text=Computer%20vision%20began%20in%20earnest,that%20could%20transform%20the%20world

55. Langlotz, Curtis & Allen, Bibb & Erickson, Bradley & Kalpathy-Cramer, Jayashree & Bigelow, Keith & Cook, Tessa & Flanders, Adam & Lungren, Matthew & Mendelson, David & Rudie, Jeffrey & Wang, Ge & Kandarpa, Krishna. (2019). A Roadmap for Foundational Research on Artificial Intelligence in Medical Imaging. Research Gate. Retrieved April 07, 2021, from https://www.researchgate.net/figure/Error-rates-on-the-ImageNet-Large-Scale-Visual-Recognition-Challenge-Accuracy_fig1_332452649

- Suberg, Lavinia, Wynn, Russell B, Kooij, Jeroen van der, Fernand, Liam, Fielding, Sophie, Guihen, Damien, Gillespie, Douglas, et al. (2014). Assessing the potential of autonomous submarine gliders for ecosystem monitoring across multiple trophic levels (plankton to cetaceans) and pollutants in shallow shelf seas. Methods in oceanography (Oxford), 10, 70–89. Elsevier B.V.

- CHELURI, TRIVIKRAM, SUSANNA, SAMARPITHA, K, MADHAVI, & DIANA, MOSES. (2017). EVALUATION OF HYBRID FACE AND VOICE RECOGNITION SYSTEMS FOR BIOMETRIC IDENTIFICATION IN AREAS REQUIRING HIGH SECURITY. i-manager’s Journal on Pattern Recognition, 4(3), 9. Nagercoil: iManager Publications.

- Majumder, Swanirbhar, Jilenkumari Devi, Kharibam, & Sarkar, Subir Kumar. (2013). Singular value decomposition and wavelet-based iris biometric watermarking. IET biometrics, 2(1), 21–27. article, HERTFORD: The Institution of Engineering and Technology.

- Kairinos, Nikolas. (2019). The integration of biometrics and AI. Biometric technology today, 2019(5), 8–10. Elsevier Ltd.

- Sussman, Bruce. “Self-Driving Cars: The Ethics of Who Should Die in a Crash.” Cybersecurity Conferences & News, 30 Oct. 2018, www.secureworldexpo.com/industry-news/self-driving-cars-ethics-pros-cons.

- Steinfeld, Nili. (2016). “I agree to the terms and conditions”: (How) do users read privacy policies online? An eye-tracking experiment. Computers in human behavior, 55, 992–1000. OXFORD: Elsevier Ltd.

- Kocabey, Enes & Camurcu, Mustafa & Ofli, Ferda & Aytar, Yusuf & Marín, Javier & Torralba, Antonio & Weber, Ingmar. "Face-to-BMI: Using Computer Vision to Infer Body Mass Index on Social Media." 9 Mar. 2017

- M. Skirpan and T. Yeh, "Designing a Moral Compass for the Future of Computer Vision Using Speculative Analysis," 2017 IEEE Conference on Computer Vision and Pattern Recognition Workshops (CVPRW), Honolulu, HI, USA, 2017, pp. 1368-1377, doi: 10.1109/CVPRW.2017.179.

- M. Skirpan and T. Yeh, "Designing a Moral Compass for the Future of Computer Vision Using Speculative Analysis," 2017 IEEE Conference on Computer Vision and Pattern Recognition Workshops (CVPRW), Honolulu, HI, USA, 2017, pp. 1368-1377, doi: 10.1109/CVPRW.2017.179.

- Ahmed, Chevalier, Dufresne-Camaro. "Computer Vision Applications and their Ethical Risks in the Global South." 25, Jan. 2020

- Dzieza, Josh. “Why CAPTCHAs Have Gotten so Difficult.” The Verge, The Verge, 1 Feb. 2019, www.theverge.com/2019/2/1/18205610/google-captcha-ai-robot-human-difficult-artificial-intelligence.