Difference between revisions of "Parental Controls"


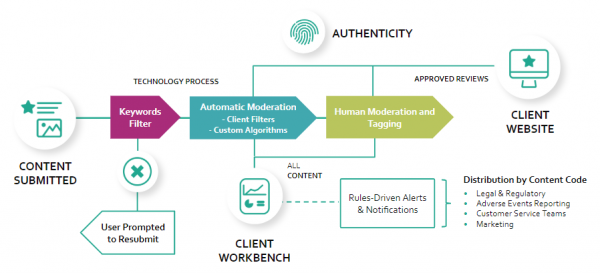

[[File:Moderation workflow.png|600px|thumbnail|Archetypal Content Moderation Workflow]]


Revision as of 16:14, 12 March 2021

Parental control software is software that lets parents control which sites their children can see and when internet access is available, and track their children's internet history. Before the widespread use of the internet, children did not have the access to information that they do now. For example, explicit music came on CDs with prominent warning labels, which made it straightforward for parents to see what content their children were consuming. Now that many children have access to the internet, the way parents monitor the content their children see has changed as well. Several companies have entered the parental control software business as the software becomes widely adopted by parents worldwide. Increasingly, academics are raising concerns about the potential for parents to abuse parental control software, given the pervasive oversight it allows.


Overview

Most types of moderation involve a top-down approach, where a moderator or small group of moderators is given discretionary power by a platform to approve or disapprove user-generated content. These moderators may be paid contractors or unpaid volunteers. A moderation hierarchy may exist, or each moderator may have independent and absolute authority to make decisions.

In general, content moderation can be broken down into 6 major categories:[1]

- Pre-Moderation screens each submission before it is visible to the public. This creates a bottleneck in user engagement, and the delay may frustrate the user base. However, it gives the platform a high degree of control over what ends up visible to the public and offers maximum protection against undesired content, eliminating the risk of exposing unsuspecting users to it. It is only practical for small user communities; otherwise the flow of content would be slowed down too much. It was common in moderated newsgroups on Usenet.[2] This method is suited to communities where child protection is vital.

- Post-Moderation screens each submission after it is visible to the public. While preventing the bottleneck problem, it is still impractical for large user communities due to the vast number of submissions. Furthermore, as the content is often reviewed in a queue, undesired content may remain visible for an extended period of time, drowned out by benign content ahead of it, which must still be reviewed. This method is preferable to pre-moderation from a user-experience perspective, since the flow of content has not been slowed down by waiting for approval.

- Reactive moderation reviews only content that has been flagged by users. It retains the benefits of both pre- and post-moderation, allowing for real-time user engagement and the immediate review of only potentially undesired content. However, it relies on user participation and is still susceptible to benign content being falsely flagged. It is therefore most practical for large user communities with a lot of user activity. Most modern social media platforms, including Facebook and YouTube, rely on this method. It makes the users themselves accountable for the information available and for determining what should or should not be taken down, and it scales to a large number of users more easily than either pre- or post-moderation.

- Distributed moderation is an exception to the top-down approach. It instead gives the power of moderation to the users, often making use of a voting system. This is common on Reddit and Slashdot, the latter also using a meta-moderation system, in which users also rate the decisions of other users.[3] This method scales well across user-communities of all sizes, but also relies on users having the same perception of undesired content as the platform. It is also susceptible to groupthink and malicious coordination, also known as brigading.[4]

- Automated moderation is the use of software to automatically assess content for desirability. It can be used in conjunction with any of the above moderation types. Its accuracy is dependent on the quality of its implementation, and it is susceptible to algorithmic bias and adversarial examples[5]. Copyright detection software on YouTube and spam filtering are examples of automated moderation[6].

- No moderation is the lack of moderation entirely. Such platforms, for example The Pirate Bay and Dark Web markets, are often host to illegal and obscene content and typically operate outside the law. Spam is a perennial problem for unmoderated platforms, but may be mitigated by other methods, such as limited posting frequency and monetary barriers to entry. However, small communities with shared values and few bad actors can also thrive under no moderation, like unmoderated Usenet newsgroups.
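The difference between pre-, post-, and reactive moderation comes down to two questions: when a submission becomes visible, and which submissions reach a moderator. The following is a minimal sketch of those two rules, not a description of any platform's actual system. The names `Mode`, `Submission`, `is_visible`, `needs_review`, and the `flag_threshold` parameter are all hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Mode(Enum):
    PRE = "pre"            # reviewed before it becomes visible
    POST = "post"          # visible at once, every item reviewed afterwards
    REACTIVE = "reactive"  # visible at once, reviewed only if users flag it


@dataclass
class Submission:
    text: str
    approved: Optional[bool] = None  # None means no moderator decision yet
    flags: int = 0                   # number of user reports (hypothetical field)


def is_visible(item: Submission, mode: Mode) -> bool:
    """Return whether the public can currently see the submission."""
    if item.approved is False:
        return False  # rejected content is hidden in every mode
    if mode is Mode.PRE:
        return item.approved is True  # hidden until a moderator approves it
    return True  # post- and reactive moderation publish first


def needs_review(item: Submission, mode: Mode, flag_threshold: int = 1) -> bool:
    """Return whether the submission belongs in the moderators' queue."""
    if item.approved is not None:
        return False  # a decision has already been made
    if mode is Mode.REACTIVE:
        return item.flags >= flag_threshold  # only flagged content is reviewed
    return True  # pre- and post-moderation review every submission
```

Under this sketch, an undecided submission is hidden only in pre-moderation, and it skips the review queue only in reactive moderation until enough users flag it, which is why reactive moderation scales with the number of flags and not with the number of posts.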

Ethical Issues

The ethical issues regarding content moderation include how it is carried out, the possible bias of content moderators, and the negative effects this kind of job has on moderators. The problem lies in the fact that content moderation cannot be carried out entirely by an autonomous program, since many cases are highly nuanced and can be judged only by knowing the context and the way humans might perceive it. The job is often ill-defined in terms of policy, yet content moderators are expected to make very difficult judgments while being allowed few or no mistakes.

Virtual Sweatshops

Often, companies outsource their content moderation tasks to third parties. This work cannot be done by computer algorithms because it is often very nuanced, which is where virtual sweatshops enter the picture. Virtual sweatshops enlist workers to complete mundane tasks for which they receive a small monetary reward. While some view this as a new market for human labor with extreme flexibility, there are also concerns about labor laws. No policy yet regulates internet labor, and the work often falls to teams of people overseas who are underpaid for the labor they perform. Companies overlook, and often choose not to acknowledge, the hands-on effort they require. Human error is inevitable, which raises concerns about privacy and trust when information is sent to these third-party moderators.[7]

Google's Content Moderation & the Catsouras Scandal

Google is home to a practically endless amount of content and information, most of which is not regulated. In 2006, 18-year-old Nikki Catsouras crashed her car in Southern California, which resulted in her gruesome death and decapitation. Members of the police force at the scene were tasked with photographing it. However, as a Halloween joke, a few of the members who took the photos sent them to various friends and family members. The pictures of Nikki's mutilated body were then passed around the internet and were easily accessible via Google. The Catsouras family was devastated that these pictures of their daughter were being viewed by millions, and desperately attempted to get them removed from the Google platform. However, Google refused to comply with the family's plea. This is a clear ethical dilemma involving content moderation: the pictures were certainly not meant to be released to the public and caused the family great distress, but because Google did not want to begin moderating specific content on its platform, it did nothing. This raises the ethical question of whether people have "The Right to Be Forgotten".[8]

Another major ethical issue with the moderation of content online is that the owners of the content or platform decide what is and is not moderated. Thousands of people and companies claim that Google purposefully moderates content that directly competes with its own platform. Shivaun Moeran and Adam Raff are two computer scientists who together created a powerful search platform called Foundem.com. The website was helpful for finding many kinds of information, and particularly for finding the cheapest possible items being sold on the internet. The key to the site was a vertical search algorithm, a complex algorithm that focuses on searching within a specific category. This vertical search algorithm was significantly more powerful than Google's search algorithm, which was a horizontal search algorithm. The couple launched their site and within the first few days experienced great success and many site visitors. After a few days, however, the visitor rate decreased significantly. They discovered that their site had been pushed multiple pages back on Google, because it directly competed with the "Google Shopping" service that Google had released. Moeran and Raff filed countless lawsuits and met with people at Google and other large companies to figure out what the issue was and how they could get it fixed, but were met with silence or ambiguity. Foundem.com never became the next big search engine, partly because of the ethical issues seen in content moderation by Google.[9]

Psychological Effects on Moderators

Content moderation can have significant negative effects on the individuals tasked with carrying out the moderation. Because most content must be reviewed by a human, professional content moderators spend hours every day reviewing disturbing images and videos, including pornography (sometimes involving children or animals), gore, executions, animal abuse, and hate speech. Viewing such content repeatedly, day after day, can be stressful and traumatic, with moderators sometimes developing PTSD-like symptoms. Others, after continuous exposure to fringe ideas and conspiracy theories, develop intense paranoia and anxiety, and begin to accept those fringe ideas as true.[10][11][12]

Further negative effects are brought on by the stress of applying the subjective and inconsistent rules regarding content moderation. Moderators are often called upon to make judgment calls regarding ambiguously-objectionable material or content that is offensive but breaks no rules. However, the performance of their moderation decisions is strictly monitored and measured against the subjective judgment calls of other moderators. A few mistakes are all it takes for a professional moderator to lose their job.[12]

A report detailing the lives of Facebook content moderators described the poor conditions these workers are subject to.[13] Even though content moderators have an emotionally intense, stressful job, they are often underpaid. Facebook does provide forms of counseling to its employees, but many are dissatisfied with the service.[13] The employees review a significant amount of traumatizing material daily, but it is their responsibility to seek therapy if needed, which is difficult for many. They are also required to oversee content constantly and are allotted only two 15-minute breaks and a half-hour lunch break. In cases where they review particularly horrifying content, they are given only a nine-minute break to recover.[13] Facebook is often criticized for its treatment of its content moderators.

Information Transparency

Information transparency is the degree to which information about a system is visible to its users.[14] By this definition, content moderation is not transparent at any level. First, content moderation is often not transparent to the public, those it is trying to moderate. While a platform may have public rules regarding acceptable content, these are often vague and subjective, allowing the platform to enforce them as broadly or as narrowly as it chooses. Furthermore, such public documents are often supplemented by internal documents accessible only to the moderators themselves.[10]

Content moderation is not transparent at the level of moderators either. The internal documents are often as vague as the public ones and contain significantly more internal inconsistencies and judgment calls that make them difficult to apply fairly. Furthermore, such internal documents are often contradicted by statements from higher-ups, which in turn may be contradicted by similar statements.[12]

Finally, even at the corporate level where policy is set, moderation is not transparent. Moderation policies are often created by an ad-hoc, case-by-case process and applied in the same manner. Some content that would normally be removed by moderation rules will be accepted for special circumstances, such as "newsworthiness". For example, videos of violent government suppression could be displayed or not, depending on the whims of moderation policy-makers and moderation QAs at the time.[10]

Bias

Due to its inherently subjective nature, content moderation can suffer from various kinds of bias. Algorithmic bias is possible when automated tools are used to remove content. For example, YouTube's automated Content ID tools may flag reviews of films or games that feature clips or gameplay as copyright violations, despite such use being Fair Use when the purpose is criticism.[6] When a YouTube creator's content is flagged, the creator loses any ad revenue from that video for as long as it remains flagged. Even if the creator successfully fights the claim and YouTube unflags the video, the creator does not receive any of the revenue the video earned while it was flagged. Algorithmic bias thus has serious financial effects for creators, especially small channels that cannot afford to fight a copyright claim.[15] Moderation may also suffer from cultural bias, when something considered objectionable by one group is considered fine by another. For example, moderators tasked with removing content that depicts minors engaging in violence may disagree over what constitutes a minor. Classification of obscenity is also culturally biased, with different societies around the world having different standards of modesty.[10][16] Moderation, whether by humans or by automated systems, may be inherently flawed because the subjective judgment of what is right and what is wrong is difficult to lay out in concrete terms. While there is no uniform solution to issues of bias in content moderation, some have suggested that a utilitarian approach may serve as a guiding ethical standard.[17]

Free Speech and Censorship

Content moderation often finds itself in conflict with the principles of free speech, especially when the content it moderates is of a political, social or controversial nature.[16] On the one hand, internet platforms are private entities with full control over what they allow their users to post. On the other hand, large, dominant social media platforms like Facebook and Twitter have significant influence over public discourse and act as effective monopolies on audience engagement. The ethical dilemma arises over who has the right to control what the public says and what gives them this right. In this sense, centralized platforms act as modern-day agoras, where John Stuart Mill's "marketplace of ideas" allows good ideas to be separated from the bad without top-down influence.[18] When corporations are allowed to decide with impunity what is or isn't allowed to be discussed in such a space, they circumvent this process and stifle free speech on the primary channel individuals use to express themselves.[19]

Anonymous Social Media

Social media sites created with the intention of keeping users anonymous so that they may post freely raise an ethical concern. Formspring, which is now defunct, was a platform that allowed anonymous users to ask selected individuals questions. Ask.fm, a similar site, has outlived its rival. However, some of the content submitted by anonymous users on these sites consists of hateful comments that contribute to cyberbullying. Two suicides have been linked to cyberbullying on Formspring.[21][22] In 2013, when Formspring shut down, Ask.fm began taking a more active approach to content moderation.

Other, similar anonymous apps include Yik Yak, Secret (now defunct), and Whisper. Learning from their predecessors and competition, Yik Yak and Whisper have also taken a more active approach to content moderation, employing not only people but also algorithms to moderate content.[23]

Bots

Although a lot of content moderation cannot be handled by computer algorithms and must be outsourced to "virtual sweatshops", a lot of content is still moderated by computer bots. The use of these bots naturally comes with many ethical concerns.[24] The largest concern is among academics, an increasing portion of whom are worried that auto-moderation cannot be effectively implemented on a global scale.[25] UCLA Assistant Professor Sarah Roberts said in an interview with Motherboard regarding Facebook's attempt at global auto-moderation, "it’s actually almost ridiculous when you think about it... What they’re trying to do is to resolve human nature fundamentally."[26] To make the case that auto-moderation isn't feasible, the article also reports that Facebook CEO Mark Zuckerberg and COO Sheryl Sandberg often have to weigh in on content moderation themselves, a testament to how situational and subjective the job is.[26]

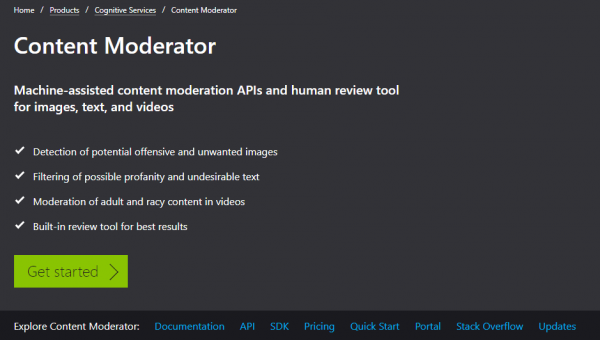

Tech companies such as Microsoft, through its Azure cloud service, have begun offering automated content moderation packages for purchase by other companies.[20] Microsoft Azure's Content Moderator advertises image moderation, text moderation in over 100 languages that monitors for profanity and contextualized offensive language, and video moderation that includes recognizing "racy" content, as well as a human review tool for situations where the automated moderator is unsure of what to do.[20]
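The combination of an automated moderator with a human review tool for uncertain cases can be pictured as routing on a confidence score. The sketch below is only an illustration of that idea and does not use or describe the Azure API: the `route` function, the score in the range 0 to 1, and both threshold values are assumptions made for this example.

```python
def route(score: float, remove_at: float = 0.9, allow_below: float = 0.2) -> str:
    """Decide what happens to one item given a classifier's score.

    `score` is a hypothetical probability (0.0 to 1.0) that the item is
    undesired. The thresholds are illustrative, not taken from any product.
    """
    if not 0.0 <= score <= 1.0:
        raise ValueError("score must be between 0.0 and 1.0")
    if score >= remove_at:
        return "remove"        # the automated moderator is confident it is undesired
    if score < allow_below:
        return "allow"         # the automated moderator is confident it is benign
    return "human_review"      # uncertain cases go to a human moderator


# Example: three items with different hypothetical scores.
decisions = [route(s) for s in (0.05, 0.55, 0.97)]
```

Widening the gap between the two thresholds sends more content to human moderators, which trades reviewer workload for fewer automated mistakes.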

References

- ↑ Grime-Viort, Blaise (December 7, 2010). "6 Types of Content Moderation You Need to Know About". Social Media Today. Retrieved March 26, 2019.

- ↑ Big-8.org. August 4, 2012. "Moderated Newsgroups". Archived from the original on August 4, 2012. Retrieved March 26, 2019.

- ↑ Slashdot. Retrieved March 26, 2019."Moderation and Metamoderation".

- ↑ Reddit. January 18, 2018. Retrieved March 26, 2019."Reddiquette: In Regard to Voting"

- ↑ Goodfellow, Ian; Papernot, Nicolas; et al (February 24, 2017). "Attacking Machine Learning with Adversarial Examples". OpenAI. Retrieved March 26, 2019.

- ↑ 6.0 6.1 Tassi, Paul (December 19, 2013). "The Injustice of the YouTube Content ID Crackdown Reveals Google's Dark Side". Forbes. Retrieved March 26, 2019.

- ↑ Zittrain, Jonathan. "The Internet Creates a New Kind of Sweatshop." Newsweek. December 7, 2009. https://www.newsweek.com/internet-creates-new-kind-sweatshop-75751

- ↑ Toobin, Jeffrey. “The Solace of Oblivion.” The New Yorker, 22 Sept. 2014, www.newyorker.com/.

- ↑ Duhigg, Charles. “The Case Against Google.” The New York Times, The New York Times, 20 Feb. 2018, www.nytimes.com/.

- ↑ Cite error: Invalid <ref> tag; no text was provided for refs named Secret_History

- ↑ Chen, Adrian (October 23, 2014). "The Laborers Who Keep Dick Pics and Beheadings Out of Your Facebook Feed". Wired. Retrieved March 26, 2019.

- ↑ 12.0 12.1 12.2 Newton, Casey (February 25, 2019). "The Trauma Floor: The Secret Lives of Facebook Moderators in America" The Verge. Retrieved March 26, 2019.

- ↑ 13.0 13.1 13.2 Simon, Scott, and Emma Bowman. “Propaganda, Hate Speech, Violence: The Working Lives Of Facebook's Content Moderators.” NPR, NPR, 2 Mar. 2019, www.npr.org/2019/03/02/699663284/the-working-lives-of-facebooks-content-moderators.

- ↑ Turilli, Matteo; Floridi, Luciano (March 10, 2009). "The ethics of information transparency". Ethics and Information Technology. 11 (2): 105-112. doi:10.1007/s10676-009-9187-9. Retrieved March 26, 2019.

- ↑ Romano, Aja. “YouTube's ‘Ad-Friendly’ Content Policy May Push One of Its Biggest Stars off the Website.” Vox, Vox, 2 Sept. 2016, www.vox.com/2016/9/2/12746450/youtube-monetization-phil-defranco-leaving-site.

- ↑ Cite error: Invalid <ref> tag; no text was provided for refs named Gatekeepers

- ↑ Mandal, Jharna, et al. “Utilitarian and Deontological Ethics in Medicine.” Tropical Parasitology, Medknow Publications & Media Pvt Ltd, 2016, www.ncbi.nlm.nih.gov/pmc/articles/PMC4778182/.

- ↑ Cite error: Invalid <ref> tag; no text was provided for refs named Garbage

- ↑ Cite error: Invalid <ref> tag; no text was provided for refs named Impossible_Choices

- ↑ 20.0 20.1 20.2 "Content Moderator." Microsoft Azure. https://azure.microsoft.com/en-us/services/cognitive-services/content-moderator/

- ↑ James, Susan Donaldson. “Jamey Rodemeyer Suicide: Police Consider Criminal Bullying Charges.” ABC News, ABC News Network, 22 Sept. 2011, abcnews.go.com/.

- ↑ “Teenager in Rail Suicide Was Sent Abusive Message on Social Networking Site.” The Telegraph, Telegraph Media Group, 22 July 2011, www.telegraph.co.uk/.

- ↑ Deamicis, Carmel. “Meet the Anonymous App Police Fighting Bullies and Porn on Whisper, Yik Yak, and Potentially Secret.” Gigaom – Your Industry Partner in Emerging Technology Research, Gigaom, 8 Aug. 2014, gigaom.com/.

- ↑ Bengani, Priyanjana. “Controlling the Conversation: The Ethics of Social Platforms and Content Moderation.” Columbia University in the City of New York, Apr. 2018, www.columbia.edu/content/.

- ↑ Newton, Casey. "Facebook’s content moderation efforts face increasing skepticism." The Verge. 24 August 2018. https://www.theverge.com/2018/8/24/17775788/facebook-content-moderation-motherboard-critics-skepticism

- ↑ 26.0 26.1 Koebler, Jason. "The Impossible Job: Inside Facebook’s Struggle to Moderate Two Billion People." Motherboard. 23 August 2018. https://motherboard.vice.com/en_us/article/xwk9zd/how-facebook-content-moderation-works