Chatbots in psychotherapy

Chatbots in psychotherapy is an overarching term for the use of machine-learning algorithms or software that mimic human conversation to assist or replace humans in multiple aspects of therapy. Ethical concerns about the prevalence of chatbots in psychotherapy include the privacy of users and how the collected data is used; the effectiveness of chatbots as a possible substitute for therapy, particularly in minority populations; the question of accountability when the therapist is an artificial intelligence; and the effects of overdependence on chatbots instead of fostering human relationships.

Artificial intelligence is intelligence demonstrated by machines, based on input data and algorithms alone. An artificially intelligent system perceives its environment and takes actions that maximize its chance of achieving its goals.[1] Unlike human intelligence, artificial intelligence can sometimes work as a black box, offering little visible reasoning behind its conclusions.

The primary aims of chatbots in therapy are to (1) analyze the relationships between symptoms exhibited by patients and possible diagnoses, and (2) act as a substitute for or addition to human therapists, given the current worldwide shortage of therapists. Companies are developing the technology to reduce the overloading of therapists and to monitor patients more closely.

As chatbot use in clinical therapy is still relatively new, some ethical concerns have arisen on the matter.

History

The idea of artificial intelligence stems from the study of mathematical logic and philosophy. The first theory to suggest that a machine can simulate any kind of formal reasoning is the Church-Turing thesis, proposed by Alonzo Church and Alan Turing. Since the 1950s, AI researchers have explored the idea that any human cognition can be reduced to algorithmic reasoning, and have pursued research in two main directions. The first is creating artificial neural networks, systems that model the biological brain. The second is developing symbolic AI (also known as GOFAI)[2], systems based on human-readable representations of problems that are solved by logic programming. Symbolic AI dominated from the 1950s to the 1990s, before technical limitations shifted the focus to subsymbolic AI.

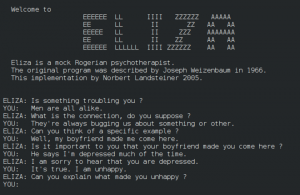

The first documented research on chatbots in psychotherapy is the chatbot ELIZA[3], developed from 1964 to 1966 by Joseph Weizenbaum. ELIZA was created to be a pseudo-Rogerian therapist that simulates human conversation. It was written in MAD-SLIP and ran primarily on the DOCTOR script, which simulated the interactions Carl Rogers had with his patients, notably by repeating what the patient said back to them. While ELIZA was developed primarily to highlight how superficial interactions between AI and humans are, and was not intended to make recommendations to patients, Weizenbaum observed that many users believed the program understood them.[3]

In the 1980s, psychotherapists started to investigate the clinical use of artificial intelligence[5], primarily the possibility of using logic-based programming in quick intervention methods such as brief cognitive behavioral therapy (CBT). This kind of therapy does not focus on the underlying causes of mental health ailments; rather, it triggers and supports changes in behavior and in cognitive distortions.[6] However, technical limitations, such as the lack of sophisticated logical systems and of breakthroughs in artificial intelligence technology, together with the decrease in funding for AI technology under the Strategic Computing Initiative, led research in this field to stagnate until the mid-1990s, when the internet became accessible to the general public.[7] The increased use of the internet in the 21st century and the long waits for therapy and other mental health services[8] led to chatbot psychotherapy being frequently used as an alternative personal check-in tool.

Currently, artificial intelligence is becoming increasingly widespread in psychotherapy, with developments focusing on data logging and building mental models of patients.

Design and development

Three concepts are highlighted as the main considerations for the construction of a chatbot: intentions (what the user demands or the purpose of the chatbot), entities (what topic the user wants to talk about) and dialogue (the exact wording the bot uses)[9]. Most psychologist-chatbots are similar in the latter two categories and differ in their intentions. The chatbots can be separated into two main categories: chatbots built for psychological assessments, and chatbots built for help interventions.
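
As a rough illustration of how the three concepts fit together, the sketch below models a single chatbot turn in Python. The names `Turn` and `interpret`, the intent labels, the keyword lists and the canned replies are all hypothetical; they are not taken from any of the chatbots described in this article, and a production system would likely use a trained classifier rather than keyword lists.

```python
import re
from dataclasses import dataclass, field

# Hypothetical keyword lists standing in for a trained intent/entity model.
INTENT_KEYWORDS = {
    "book_appointment": ["appointment", "schedule", "book"],
    "mood_check_in": ["feel", "feeling", "mood"],
}
ENTITY_KEYWORDS = {
    "sleep": ["sleep", "insomnia", "tired"],
    "work": ["work", "job", "boss"],
}
# Dialogue: the exact wording the bot uses for each intention.
DIALOGUE = {
    "book_appointment": "Sure, which day works best for you?",
    "mood_check_in": "Thanks for sharing. Can you tell me more about {topic}?",
    "unknown": "Sorry, I did not understand that.",
}


@dataclass
class Turn:
    intent: str
    entities: list = field(default_factory=list)
    reply: str = ""


def interpret(message: str) -> Turn:
    """Pick an intention, extract entities, and choose the bot's wording."""
    words = re.findall(r"[a-z']+", message.lower())
    intent = next((name for name, keys in INTENT_KEYWORDS.items()
                   if any(k in words for k in keys)), "unknown")
    entities = [name for name, keys in ENTITY_KEYWORDS.items()
                if any(k in words for k in keys)]
    topic = entities[0] if entities else "that"
    return Turn(intent, entities, DIALOGUE[intent].format(topic=topic))
```

Calling `interpret("I feel tired at work")` yields the intention `mood_check_in` with the entities `sleep` and `work`, while an unrecognized message falls back to the `unknown` wording.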

Types of chatbots

Depending on their usage, therapeutic chatbots can be separated into three categories according to their dialogue and the user responses they can accept.

Option-based response

Option-based responses are common in chatbots built for psychological assessments or for logistical concerns, such as scheduling appointments. The chatbot is either trained with or hard-coded to run a pre-assessment interview that asks for demographic information about the patient, including age, name, occupation and gender. These chatbots act more like virtual assistants, and users are explicitly warned to use concise language so that the virtual assistant can recognize entities more accurately.[10]
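
A hard-coded, option-based interview could look roughly like the following Python sketch. The questions, the option lists and the function names `parse_choice` and `run_intake` are assumptions made for illustration and do not describe any specific product.

```python
# Hypothetical intake questions; each lists the only answers the bot accepts.
INTAKE_QUESTIONS = [
    {"key": "age_group", "prompt": "What is your age group?",
     "options": ["under 18", "18-39", "40-64", "65 or older"]},
    {"key": "reason", "prompt": "What can I help you with?",
     "options": ["schedule an appointment", "take an assessment"]},
]


def parse_choice(question: dict, raw: str):
    """Return the chosen option, or None if the input is not a valid choice.

    Accepts either the 1-based option number or the exact option text.
    """
    text = raw.strip().lower()
    options = question["options"]
    if text.isdecimal() and 1 <= int(text) <= len(options):
        return options[int(text) - 1]
    return text if text in options else None


def run_intake(answers: list) -> dict:
    """Walk through the questions using pre-supplied answers, one per question."""
    record = {}
    for question, raw in zip(INTAKE_QUESTIONS, answers):
        record[question["key"]] = parse_choice(question, raw)
    return record
```

Because anything outside the listed options is rejected (returned as `None`), the bot never has to interpret free text, which is why such assistants ask users to keep their answers concise.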

Sentinobot

Sentino API is a “data-driven, AI-powered service built to provide insights into one’s personality”[11] developed in 2016. The bot version accepts two kinds of input. Users can either type their own sentences starting with “I” (such as “I am an introvert”) or type the command “test me” to be led through a Big Five personality test (also known as OCEAN). Other inputs unrelated to personality assessment return one of a list of coded responses. If the test is selected, users are given statements (30 in each group) to which they can respond on a five-option scale of “disagree”, “slightly disagree”, “neutral”, “slightly agree” and “agree”.

The user is then sent a link to access their profile. For each group of 30 questions the user answers, a new section is added to their profile. As of January 2022, there are four sections: “Big 5 Portrait”, “10 Factors of Big5”, “30 Facets”, and “Adjective Cloud”.[12]
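
Sentino's actual scoring method is not described in the sources cited here. The Python sketch below only shows a common, generic way to turn five-option answers into trait scores: the 1 to 5 numeric mapping, the reverse-keying flag and the function name `score_answers` are assumptions for illustration.

```python
from collections import defaultdict

# The five response options named above, mapped to assumed numeric scores.
SCALE = {"disagree": 1, "slightly disagree": 2, "neutral": 3,
         "slightly agree": 4, "agree": 5}


def score_answers(answers):
    """Average the scores per trait.

    `answers` is a list of (trait, response, reverse_keyed) tuples. A
    reverse-keyed statement (for example "I am quiet" for extraversion)
    has its score flipped before averaging.
    """
    totals, counts = defaultdict(int), defaultdict(int)
    for trait, response, reverse_keyed in answers:
        score = SCALE[response.lower()]
        if reverse_keyed:
            score = 6 - score
        totals[trait] += score
        counts[trait] += 1
    return {trait: totals[trait] / counts[trait] for trait in totals}
```

For instance, agreeing with one extraversion statement and also agreeing with a reverse-keyed one averages out to the scale midpoint of 3.0 for that trait.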

Unlike most AI psychotherapists, Sentinobot does not help with mental health intervention, and only serves as an alternative way to conduct a personality test.

Toolkit based

Toolkit-based chatbots use brief CBT to focus on quick intervention. They follow a script to ask users questions about their mood and to guide them towards a personal understanding of intervention methods. While the dialogue takes the form of a chat, the chatbots’ primary goal is not to have a conversation with the user. Instead, quick check-ins are emphasised, and the chatbot uses the information to suggest a method of intervention by referring the user to a specific pre-made toolkit. Further text-based instructions or videos made by human psychotherapists accompany the chatbot function. The purpose of these chatbots is not to build connections between humans and chatbots but to help users “learn skills to manage emotional resilience”.[13]

Woebot

Woebot was released in 2017 by Woebot Health, which was founded by clinical research psychologist Alison Darcy. The bot describes itself less as a human replacement and more as a “choose-your-own-adventure self help book”[14], focusing on developing users’ goal setting, accountability, motivation, engagement and reflection on their mental health, and notes that the app is not a replacement for therapy. Each interaction with Woebot begins with a general mental health enquiry, to which users can respond either with simple options containing a few words or emojis, or with free text. Mood data is gathered with follow-up questions, after which Woebot presents the user with a number of toolkits that teach about cognitive distortions. The user attempts to identify which (if any) cognitive distortions they are using, after which Woebot guides the user in rephrasing their thoughts.[15]

Woebot is primarily built on a decision tree and accepts options as inputs, but it also picks up keywords from natural-language text input to determine where the conversation should go next[14]. While the chatbot does not engage in open-ended conversation, it expresses empathy upon recognising keywords related to the users’ struggles (for example, responding with “I’m sorry to hear that. That must be very tough for you.”), and its style of dialogue is modeled on “human clinical decision making and dynamics of social discourse”[14].
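
The combination of a decision tree with keyword spotting can be sketched in Python as follows. This is not Woebot's code: the tree, the prompts, the keyword lists and the function name `next_node` are hypothetical and only illustrate the general technique of trying an exact option first and falling back to keywords in free text.

```python
import re

# Hypothetical decision tree: each node has a prompt and maps options to the next node.
TREE = {
    "start": {"prompt": "How are you feeling today?",
              "options": {"good": "celebrate", "bad": "explore"}},
    "celebrate": {"prompt": "Glad to hear it! Want to note what went well?",
                  "options": {}},
    "explore": {"prompt": "I'm sorry to hear that. Want to try a thought exercise?",
                "options": {}},
}
# Hypothetical keywords used when the user types free text instead of an option.
KEYWORDS = {
    "explore": ["sad", "anxious", "stressed", "lonely"],
    "celebrate": ["happy", "great", "proud"],
}


def next_node(current: str, user_input: str) -> str:
    """Return the name of the node the conversation should move to."""
    text = user_input.strip().lower()
    options = TREE[current]["options"]
    if text in options:                      # 1) an exact option was chosen
        return options[text]
    words = set(re.findall(r"[a-z']+", text))
    for node, keys in KEYWORDS.items():      # 2) fall back to keyword spotting
        if node in options.values() and words & set(keys):
            return node
    return current                           # 3) nothing recognized: ask again
```

Staying on the current node when nothing is recognized mirrors the scripted nature of such bots: the conversation can only move along branches the designers have written.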

Conversational agents

Conversational chatbots resemble the speech of a human being[16] and aim to act as a substitute or additional therapist for the client. These chatbots aim to foster a strong emotional relationship between the human and the bot[17], or to ease into therapy people who are more comfortable confiding in an avatar.

ELIZA

ELIZA was developed in the MIT Artificial Intelligence lab by Joseph Weizenbaum and was the first chatbot. It was not made for clinical use, but its conversational style imitates Rogerian therapy, also known as person-centered therapy. This is a type of therapy in which the client directs the conversation. The therapist does not try to interpret or judge anything, and only restates the client’s ideas to clarify their thoughts.[18]

ELIZA processes input using the basic natural language processing procedure of looking for keywords and ranking them, then outputting a response categorized under the matching topic. For example, if the keyword “alike” is detected, ELIZA will categorize the exchange as a conversation on the topic of similarity, then respond “in what way?” Otherwise, ELIZA breaks the user input down into small segments and either rephrases them by changing the subject to restate the idea (for example, “I am happy” as a user input might generate the response “you are happy”) or asks a probing question (outputting “what makes you happy?”).[3]
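
The keyword-ranking and rephrasing procedure can be approximated in a few lines of Python. This is a simplified sketch, not Weizenbaum's original implementation: the rule list, the rank values, the default reply and the function names `reflect` and `respond` are assumptions, and only the “alike” and “I am happy” examples come from the description above.

```python
import re

# Pronoun swaps used to restate the user's words from the bot's point of view.
REFLECTIONS = {"i": "you", "am": "are", "my": "your", "me": "you",
               "you": "I", "your": "my"}

# (rank, pattern, response template); the highest-ranked matching keyword wins.
RULES = [
    (10, re.compile(r"\balike\b"), "In what way?"),
    (5, re.compile(r"\bi am (.+)"), "What makes you {0}?"),
    (1, re.compile(r"\bmy (.+)"), "Tell me more about your {0}."),
]
DEFAULT_REPLY = "Please go on."


def reflect(fragment: str) -> str:
    """Swap first- and second-person words in a captured fragment."""
    return " ".join(REFLECTIONS.get(word, word) for word in fragment.split())


def respond(message: str) -> str:
    """Find all matching keywords, pick the highest rank, fill its template."""
    text = re.sub(r"[^a-z' ]", "", message.lower()).strip()
    matches = [(rank, m, template) for rank, pattern, template in RULES
               if (m := pattern.search(text))]
    if not matches:
        return DEFAULT_REPLY
    _, match, template = max(matches, key=lambda item: item[0])
    return template.format(*(reflect(g) for g in match.groups()))
```

With these rules, `respond("I am happy")` returns “What makes you happy?”, and a sentence containing both “my” and “alike” is answered with “In what way?” because “alike” carries the higher rank. Each call looks only at the current message, so nothing from earlier turns influences the reply.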

By design, ELIZA does not retain the context of the conversation and processes input only at a surface level.

Ellie

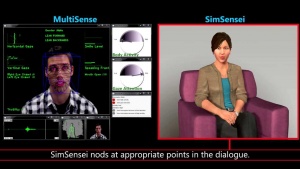

Ellie is modelled on ELIZA and was developed by the Institute for Creative Technologies at the University of Southern California.[19] Ellie is designed to detect symptoms of depression and post-traumatic stress disorder (PTSD) through a conversation with a patient, and acts as a “decision-support tool” that helps therapists make decisions about diagnosis and treatment.

Ellie gathers information from both the verbal and non-verbal content of the conversation, including body language, facial movements and intonation in speech, and reacts accordingly using MultiSense[21] technology. Symptoms are identified by comparing the patient’s input with a database of both civilian and military data.[20]

Replika

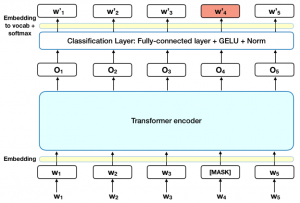

Replika was developed by Luka and started off as a “companion friend” that has since incorporated psychotherapy training toolkits. Replika is trained on a set of pre-determined and moderated sentences, which are then run through GPT-3 and BERT models to generate new responses. A feedback loop is created by users reacting to the messages Replika sends via upvotes and downvotes, emojis, or direct text messages. Facial recognition and speech recognition are also implemented, and users can send Replika images and call Replika in addition to texting.[22]

While it is advertised as a therapeutic tool on the App Store, Replika’s website and in-app persona describe it as a companion to the user, meant to be the user’s friend rather than a therapist.

Ethical concerns

Data collection and privacy

In order to train artificial intelligence systems like chatbots, large amounts of data must be gathered to improve the algorithm or, in some cases, to create a contextual conversation with the user. However, trust is built on confidentiality and privacy, and is “fundamental to therapeutic relationships”.[23]

The collection of such data introduces surveillance into a usually confidential conversation, and many therapeutic sessions involve discussing sensitive information alongside identifiable information. For example, Woebot offers conversation via Facebook Messenger, and the conversation, along with the user’s identifiable information, is collected by Facebook. Replika’s recent development blog[24] states that it uses user feedback to improve the algorithm.

However, users are often not explicitly informed of how their information is used. While client confidentiality is protected by state laws in the USA, this protection does not apply to chatbot therapy. Patients do not have the guidance of a healthcare professional, and “may not be aware that the protections for privacy or confidentiality that apply in a professional therapeutic interaction likely do not apply to the individual's usage of the direct-to-consumer application”[25] when they assume the chatbot is a substitute for therapy.

According to research, most apps only describe the primary use of collected data, and most do not disclose the transmission of data to third parties in their privacy policy.[26] Most chatbots also do not offer privacy or confidentiality information in conversations, with the information only being offered on the developer’s website.[25]

Efficacy and accountability

The effectiveness and efficacy of a therapist are often evaluated by the therapist’s competence, their personal connection with the patient and the patient’s trust in the therapist.[27] Many chatbots are advertised as “mental health apps” on the market, but little is done to filter out harmful content or misinformation.[27] According to a study by Larsen et al., of 1435 apps that claim to address depression, 38% claim to be effective but only 2.6% provide scientific backing for their claims.[28] Due to the lack of monitoring, some applications include misleading information about mental health conditions or engage in bad practice, such as encouraging risky behavior as an alternative to suicide. Vulnerable users who trust the mental health advice given to them may be diverted from receiving effective treatment or directly harmed by the advice.[27] Many chatbots also describe themselves as an addition to and not a substitute for therapy, but advertise themselves as a “therapy bot”, giving the false impression that they are a “cheaper alternative to therapy”.

Chatbots rely on natural language processing (NLP) to generate their responses. Natural language processing is the programming of computers to understand human conversational language. Early tools, such as ELIZA or AliceBot (ALICE stands for Artificial Linguistic Internet Computer Entity)[3], were mostly built on preconfigured templates hard-coded by humans. They had no framework for contextualizing input and struggled to follow a coherent conversation.[3]

As such, they only pick out keywords and do not know how to defuse situations as a human therapist would. Chatbots cannot “grasp the nuances of users’ life history and current circumstances that may be at the root of mental health difficulties”.[25] If a user sends a complex message, they may only respond that they did not understand, or provide a response that is off-topic and may come across as dismissive. Chatbots also have difficulty managing recurring conversations or past topics the users mentioned.[29] When a chatbot cannot support the user, its competence as a therapist is called into question, and this may “undermine the user’s sense that the chatbot is listening”[27]. It is also unclear how chatbots can protect users’ safety in emergency situations, and how they can react to such situations.

Representation

Chatbots are built on natural language processing tools, such as GPT-3 for generating response paragraphs and ELMo and BERT for feature extraction. However, bias in the data used to train these tools leads to bias in conversation. Word embedding frameworks commonly used in natural language processing tasks, particularly in programs such as ELMo and BERT that are used to build chatbot tools, pick up on preexisting gender and racial stereotypes, even when trained primarily on seemingly impartial sources like Google News.[30] For example, the word “receptionist” is often linked with “female”. White males are also the most represented group in medical data sets[31] and on the internet, and most models are trained on standardized American English data sets, since these are the most common on the internet.
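
One simple way to see such a bias is to measure how strongly a word's vector points along a “she minus he” direction. The Python sketch below does this with cosine similarity. The three-dimensional vectors are made up purely for illustration and are not real embeddings or measured results; the function names `cosine` and `gender_lean` are likewise hypothetical.

```python
import math

# Made-up 3-dimensional vectors for illustration only. Real embeddings have
# hundreds of dimensions and are learned from text, not written by hand.
TOY_VECTORS = {
    "he": [1.0, 0.2, 0.1],
    "she": [-1.0, 0.2, 0.1],
    "receptionist": [-0.6, 0.7, 0.3],
    "engineer": [0.5, 0.8, 0.2],
    "table": [0.0, 0.1, 0.9],
}


def cosine(u, v):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)


def gender_lean(word, vectors=TOY_VECTORS):
    """Cosine between a word and the she-minus-he direction.

    Positive values lean toward "she", negative values toward "he",
    and values near zero indicate no lean along this direction.
    """
    direction = [s - h for s, h in zip(vectors["she"], vectors["he"])]
    return cosine(vectors[word], direction)
```

In this toy setup “receptionist” gets a positive lean and “engineer” a negative one by construction; running the same measurement on real pretrained embeddings is how stereotyped associations of this kind are typically surfaced.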

Current NLP systems fail to deal with many linguistic variations. They respond better to standard varieties of language, particularly standardized English, and often fail to understand other dialects, such as African American Vernacular English (AAVE)[34]. Chatbots may also pick up on internet slang and popular culture references that are not understandable to everyone or may even be offensive to some populations. In terms of content, chatbots are also more competent at elaborating on topics that interest the white male majority, such as video games.

The reduced effectiveness of chatbots for minorities caused by these discrepancies further and disproportionately limits minorities’ access to therapy.

The ELIZA effect

The ELIZA effect is a form of anthropomorphisation in which humans unconsciously assume that computers act like humans and have human intelligence. An example of this is a machine saying “thank you” at the end of a transaction, leading users to believe that it actually knows how to express gratitude.[35] This phenomenon was first observed by ELIZA’s creator Joseph Weizenbaum, who noted that research assistants who knew that ELIZA was entirely scripted quickly developed an affinity towards her and confided in her as they would in a human being.

Given the interactive nature of therapy, most artificial intelligence tools developed around psychology are based on the Turing test. The test was proposed in Alan Turing’s 1950 paper Computing Machinery and Intelligence, which asks the question “can machines think?”[36]

The paper puts forward the idea that if a human cannot distinguish between the answers given by a machine and those given by a human, the machine is effectively intelligent, regardless of how it came to its conclusions. While the paper was written as a think piece to evaluate the computational abilities of machines, many chatbot designers have since used variations of the Turing test as a benchmark of proficiency, as the “machine must be able to mimic a human being to build trust with the patient and for the patient to be willing to talk to the chatbot”[10].

When a chatbot acts mostly like a human but does not always immediately disclose that it is a computer and not a human, users may not realize, or may forget, that they are not talking to a human being in a therapy session, and may be thrown off when the chatbot does not respond adequately.[27]

Patients seeking therapy are often in distress, and such situations ease “the attachment to social chatbots if they perceive the chatbots’ response as offering emotional support and psychological security”.[37] Patients may form a dependent relationship with the chatbot, often identifying themselves as the caregiver of the chatbot and feeling responsible for the chatbot’s “emotions”, even if they rationally understand that the chatbot does not feel. This emotional burden may cause patients to struggle further, or to become annoyed with the chatbot after a period of time.

It has also been noted that the attachment users may form to chatbots because of this effect may lead to over-reliance on the technology and cause further isolation from real-life connections if they believe that the chatbot is the only companion they need, worsening their mental health problems.[37]

References

- Russell, S., and Norvig, P. (2009). Artificial Intelligence: A Modern Approach, 3rd Edn. Saddle River, NJ: Prentice Hall.

- Haugeland, J. (1980). Psychology and computational architecture. Behavioral and Brain Sciences, 3(1), 138–139. https://doi.org/10.1017/s0140525x00002120

- Weizenbaum, J. (1966b). ELIZA—a computer program for the study of natural language communication between man and machine. Communications of the ACM, 9(1), 36–45. https://doi.org/10.1145/365153.365168

- ELIZA conversation. (n.d.). [Photograph]. https://commons.wikimedia.org/wiki/File:ELIZA_conversation.png

- Glomann, L., Hager, V., Lukas, C. A., & Berking, M. (2018b). Patient-Centered Design of an e-Mental Health App. Advances in Intelligent Systems and Computing, 264–271. https://doi.org/10.1007/978-3-319-94229-2_25

- Benjamin, C. L., Puleo, C. M., Settipani, C. A., Brodman, D. M., Edmunds, J. M., Cummings, C. M., & Kendall, P. C. (2011b). History of Cognitive-Behavioral Therapy in Youth. Child and Adolescent Psychiatric Clinics of North America, 20(2), 179–189. https://doi.org/10.1016/j.chc.2011.01.011

- McCorduck, P. (2004). Machines Who Think (2nd ed.). Routledge.

- Williams, M. E., Latta, J., & Conversano, P. (2007). Eliminating The Wait For Mental Health Services. The Journal of Behavioral Health Services & Research, 35(1), 107–114. https://doi.org/10.1007/s11414-007-9091-1

- Khan, R., & Das, A. (2017). Build Better Chatbots: A Complete Guide to Getting Started with Chatbots (1st ed.). New York: Apress

- Romero, M., Casadevante, C., & Montoro, H. (2020). CÓMO CONSTRUIR UN PSICÓLOGO-CHATBOT. Papeles Del Psicólogo - Psychologist Papers, 41(1). https://doi.org/10.23923/pap.psicol2020.2920

- Sentino API - personality, big5, NLP, psychology analysis. (2016). Sentino. https://sentino.org/api

- Sentino project - Explore yourself. (n.d.). Sentino Profile. https://sentino.org/profile

- Wysa: Frequently asked questions. (n.d.). Wysa. https://www.wysa.io/faq

- Fitzpatrick, K. K., Darcy, A., & Vierhile, M. (2017). Delivering Cognitive Behavior Therapy to Young Adults With Symptoms of Depression and Anxiety Using a Fully Automated Conversational Agent (Woebot): A Randomized Controlled Trial. JMIR Mental Health, 4(2), e19. https://doi.org/10.2196/mental.7785

- Woebot Health. (2022, January 31). Relational Agent for Mental Health. https://woebothealth.com/

- Hussain, S., Ameri Sianaki, O., & Ababneh, N. (2019). A Survey on Conversational Agents/Chatbots Classification and Design Techniques. Advances in Intelligent Systems and Computing, 946–956. https://doi.org/10.1007/978-3-030-15035-8_93

- Patel, F., Thakore, R., Nandwani, I., & Bharti, S. K. (2019). Combating Depression in Students using an Intelligent ChatBot: A Cognitive Behavioral Therapy. 2019 IEEE 16th India Council International Conference (INDICON).

- Greenfield, D. P. (2004). Book Section: Essay and Review: Current Psychotherapies (Sixth Edition). The Journal of Psychiatry & Law, 32(4), 555–560. https://doi.org/10.1177/009318530403200411

- Tweed, A. (n.d.). From Eliza to Ellie: the evolution of the AI Therapist. AbilityNet. https://abilitynet.org.uk/news-blogs/eliza-ellie-evolution-ai-therapist

- Robinson, A. (2017b, September 21). Meet Ellie, the machine that can detect depression. The Guardian. https://www.theguardian.com/sustainable-business/2015/sep/17/ellie-machine-that-can-detect-depression

- MultiSense - VHToolkit - Confluence - Institute for Creative Technologies. (n.d.). Multisense. https://confluence.ict.usc.edu/display/VHTK/MultiSense

- Gavrilov, D. (2021, October 21). Building a compassionate AI friend. Replika Blog. https://blog.replika.com/posts/building-a-compassionate-ai-friend

- Birkhäuer, J., Gaab, J., Kossowsky, J., Hasler, S., Krummenacher, P., Werner, C., & Gerger, H. (2017). Trust in the health care professional and health outcome: A meta-analysis. PLOS ONE, 12(2), e0170988. https://doi.org/10.1371/journal.pone.0170988

- Gavrilov, D. (2021b, October 21). Building a compassionate AI friend. Replika Blog. https://blog.replika.com/posts/building-a-compassionate-ai-friend

- Kretzschmar, K., Tyroll, H., Pavarini, G., Manzini, A., & Singh, I. (2019). Can Your Phone Be Your Therapist? Young People’s Ethical Perspectives on the Use of Fully Automated Conversational Agents (Chatbots) in Mental Health Support. Biomedical Informatics Insights, 11, 117822261982908. https://doi.org/10.1177/1178222619829083

- Huckvale, K., Torous, J., & Larsen, M. E. (2019). Assessment of the Data Sharing and Privacy Practices of Smartphone Apps for Depression and Smoking Cessation. JAMA Network Open, 2(4), e192542. https://doi.org/10.1001/jamanetworkopen.2019.2542

- Chandrashekar, P. (2018). Do mental health mobile apps work: evidence and recommendations for designing high-efficacy mental health mobile apps. mHealth, 4, 6. https://doi.org/10.21037/mhealth.2018.03.02

- Larsen, M. E., Huckvale, K., Nicholas, J., Torous, J., Birrell, L., Li, E., & Reda, B. (2019). Using science to sell apps: Evaluation of mental health app store quality claims. Npj Digital Medicine, 2(1). https://doi.org/10.1038/s41746-019-0093-1

- Grudin, J., & Jacques, R. (2019). Chatbots, Humbots, and the Quest for Artificial General Intelligence. Proceedings of the 2019 CHI Conference on Human Factors in Computing Systems. https://doi.org/10.1145/3290605.3300439

- Bolukbasi, T. (2016). Man is to Computer Programmer as Woman is to Homemaker? Debiasing Word Embeddings. NIPS’16: Proceedings of the 30th International Conference on Neural Information Processing Systems, 4356–4364. https://arxiv.org/abs/1607.06520

- Nordling, L. (2019). A fairer way forward for AI in health care. Nature, 573(7775), S103–S105. https://doi.org/10.1038/d41586-019-02872-2

- Horev, R. (2018, November 17). BERT Explained: State of the art language model for NLP. Medium. https://towardsdatascience.com/bert-explained-state-of-the-art-language-model-for-nlp-f8b21a9b6270

- Devlin, J. (2018, October 11). BERT: Pre-training of Deep Bidirectional Transformers for Language. . . arXiv.Org. https://arxiv.org/abs/1810.04805

- Oxford Insights. (2019, August). Racial Bias in Natural Language Processing.

- Hofstadter, Douglas R. (1996). "Preface 4 The Ineradicable Eliza Effect and Its Dangers, Epilogue". Fluid Concepts and Creative Analogies: Computer Models of the Fundamental Mechanisms of Thought. Basic Books. p. 157. ISBN 978-0-465-02475-9.

- TURING, A. M. (1950). I.—COMPUTING MACHINERY AND INTELLIGENCE. Mind, LIX(236), 433–460. https://doi.org/10.1093/mind/lix.236.433

- Xie, T., & Pentina, I. (2022). Attachment Theory as a Framework to Understand Relationships with Social Chatbots: A Case Study of Replika. Proceedings of the Annual Hawaii International Conference on System Sciences. https://doi.org/10.24251/hicss.2022.258