Bot

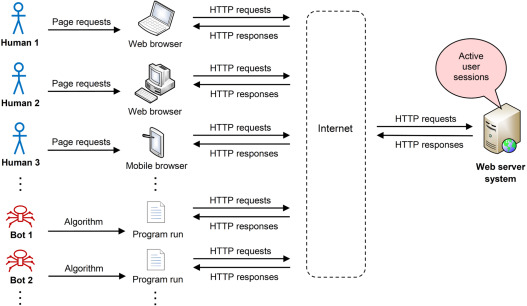

A bot (or, more specifically, an Internet bot) is a computer program that performs automated tasks over a network (commonly the Internet).[2] Bots carry out repetitive, predefined tasks, often in a manner that mimics human behavior.[3] Because they are automated, bots can complete tasks more efficiently and at a larger scale than humans. Compared with other software applications, the time needed to develop and deploy bots (especially those that do not converse with online users) is relatively short.[2] Consequently, bots have a significant presence on the Internet and power core components of the modern Web, such as search engines. These same capabilities, however, mean that bots are also frequently used to carry out a variety of malicious activities.

History

Turing test

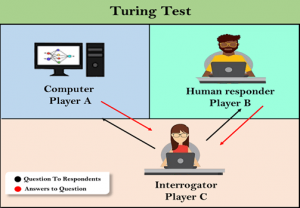

The notion of a bot dates back to 1950, when Alan Turing proposed the imitation game, later named the Turing test.[5] The test, or game, revolves around three players: two humans and one computer.[6] One of the human players, the interrogator, is isolated and inputs questions into a computer. The questions are structured in a particular format and a limited number are asked. The other human player and the computer player answer the questions asked. As the game proceeds, the interrogator uses the answers to determine which of the other players is the human and which is the computer. The computer player attempts to answer questions in a manner that imitates humans and is said to have passed the Turing test if the interrogator mistakenly concludes that it is the human player. The computer player in this sense is thus seen as a representation of a bot.

Early bots

The computer player used in the Turing test closely resembles a chatbot, a bot that interacts with humans via dialogue.[7] In the decades following the inception of the Turing test, chatbot development grew significantly. During the 1960s, MIT professor Joseph Weizenbaum created ELIZA, a bot that utilizes natural language processing (NLP) to converse with humans. The original version of ELIZA was quite limited in its functionality and its ability to hold conversations, but it is nevertheless remembered as one of the first true instances of a bot.[5]

Following ELIZA, several chatbots emerged with varying behavior and oftentimes enhancements to preceding chatbots. These include PARRY (1972), Jabberwacky (1988), Dr. Sbaitso (1991), and ALICE (1995).[8] Many of the improvements made to chatbots over time are a result of advances in communication technologies, such as instant messaging (IM) and Internet Relay Chat (IRC).[9]

Web era

With the development of the Web, the usage and capabilities of bots expanded beyond chatting.[10] In particular, web crawlers, which are bots used by search engines to index web pages, arose during the 1990s. One of the earliest web crawlers was developed in 1994 by Brian Pinkerton, a student at the University of Washington. Pinkerton’s web crawler led to WebCrawler, the first search engine to support full-text search of web pages.[11] In 1996, Larry Page and Sergey Brin began BackRub, the Stanford University research project whose crawler was the forerunner of Googlebot.[10]

As the Web and Internet have grown, so have the roles and functions of bots. Bots are currently being used in several contexts, including online shopping, website monitoring, and social media.[3]

Design

Components

Like most software applications, bots can be designed in several ways; however, there are a few underlying components that most designs share. These include application logic, databases, and external integrations.[2] Application logic is the code that drives a bot (i.e., the program for a bot). It determines a bot’s behavior and interacts with any databases or external integrations that a bot utilizes. Databases store data, which may be pre-existing (before a bot runs) or created as a bot runs. Pre-existing data can help guide a bot’s decisions. Newly created data can be processed for purposes independent of a bot’s functionality, as is the case with web crawlers. External integrations, which usually occur through an application programming interface (API), enable a bot to utilize services provided by another party (e.g., Twitter). Such integrations can spare a bot’s developer from writing the complicated code needed to perform a task (e.g., posting on Twitter).[12]
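As a rough illustration of how these three components can fit together, the following Python sketch wires a small piece of application logic to a database and an external integration. Everything in it is hypothetical: the `ReminderBot` class, the `posted` table, and the `post_status` function (a stand-in for a third-party API client that makes no network call) are assumptions made for the example, not part of any real service.

```python
import sqlite3


def post_status(text: str) -> dict:
    """Hypothetical stand-in for a third-party API client (no network call is made)."""
    return {"ok": True, "text": text}


class ReminderBot:
    """Application logic: decides what to post and records what it has done."""

    def __init__(self, db: sqlite3.Connection, post=post_status):
        self.db = db      # database component
        self.post = post  # external integration, injected so it can be swapped
        self.db.execute("CREATE TABLE IF NOT EXISTS posted (text TEXT PRIMARY KEY)")

    def run(self, messages):
        """Post each message once, skipping anything already in the database."""
        sent = []
        for text in messages:
            already = self.db.execute(
                "SELECT 1 FROM posted WHERE text = ?", (text,)
            ).fetchone()
            if already:
                continue
            if self.post(text)["ok"]:
                self.db.execute("INSERT INTO posted (text) VALUES (?)", (text,))
                sent.append(text)
        self.db.commit()
        return sent


bot = ReminderBot(sqlite3.connect(":memory:"))
print(bot.run(["Stand-up at 10:00", "Stand-up at 10:00", "Release at 15:00"]))
print(bot.run(["Release at 15:00"]))  # nothing new to post on the second run
```

Passing the integration in as a parameter is only one possible design choice; it keeps the application logic testable without contacting the external service.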

In cases where a bot requires artificial intelligence (AI), NLP or machine learning (ML) is often used.[13] These techniques can be implemented directly in a bot’s application logic or accessed through an external integration.

Botnets

In the general sense, a botnet is a network of computers connected through the Internet.[15] Each device in the network runs one or more bots, and the botnet as a whole works to accomplish a specific task. Communication in early botnets occurred through IRC.

Although botnets can be used for legitimate purposes, such as ensuring that a website stays online, they are often used for malicious purposes.[16] This occurs when a remote party, the bot herder, breaches the security defenses of several computers and installs malware on them.[17] Once the malware has been installed, the bot herder can run bots on the infected devices to carry out malicious operations, such as spamming.

In terms of architecture, botnets can be organized in a wide array of arrangements. Common models used to construct botnets include client-server, star network, hierarchical network, and peer-to-peer.[17]

Types of bots

This is a non-exhaustive list.

Social media bots

A social media bot is a bot that interacts with a social media platform.[18] The actions that such bots perform vary depending on the specific platform, but common functions include posting content, following users, attempting to gain followers, and liking or commenting on posts. Social media bots, like chatbots, are typically designed to imitate human behavior; however, they differ from chatbots in that they normally do not have the ability to maintain conversations with other platform users.[12] Additionally, social media bots tend to be much easier to manage than chatbots, and thus they can be deployed at a much larger scale. Indeed, according to a study conducted by the University of Southern California and Indiana University in 2017, up to 15% of Twitter accounts were controlled by bots.[19]

Web crawlers

A web crawler, or spider, is a type of bot that traverses the Web with the intent of discovering a large subset of all web pages and their content.[20] Web crawlers are mostly utilized by search engine companies so that they can provide relevant search results to users. A web crawler begins with an initial set of pages that it will crawl. It then uses the hyperlinks on those initial pages to discover new pages, which are crawled in the same manner to discover more pages. While crawling, a web crawler downloads and indexes the pages that it comes across. Web crawlers do not crawl every single page on the Web.
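The crawl loop described above (start from seed pages, follow hyperlinks, download and index each page) can be sketched in Python as a breadth-first traversal. This is a minimal illustration under stated assumptions, not how any production crawler works: the `FAKE_WEB` dictionary is a hypothetical in-memory stand-in for the Web so the example runs without network access, the `example.test` URLs are made up, and "indexing" is reduced to storing each page's raw HTML.

```python
from collections import deque
from html.parser import HTMLParser

# Hypothetical in-memory "web": URL -> HTML. A real crawler would fetch pages over HTTP.
FAKE_WEB = {
    "http://example.test/": '<a href="http://example.test/a">A</a> '
                            '<a href="http://example.test/b">B</a>',
    "http://example.test/a": '<a href="http://example.test/b">B</a>',
    "http://example.test/b": '<a href="http://example.test/">home</a> '
                             '<a href="http://example.test/missing">x</a>',
}


class LinkExtractor(HTMLParser):
    """Collects the href value of every <a> tag in a page."""

    def __init__(self):
        super().__init__()
        self.links = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            for name, value in attrs:
                if name == "href" and value:
                    self.links.append(value)


def crawl(seeds, fetch, max_pages=100):
    """Breadth-first crawl from the seed URLs; returns {url: html} for fetched pages."""
    index = {}
    queue = deque(seeds)
    seen = set(seeds)  # URLs already queued, so no page is visited twice
    while queue and len(index) < max_pages:
        url = queue.popleft()
        html = fetch(url)
        if html is None:  # page could not be downloaded
            continue
        index[url] = html  # "indexing" here is just storing the raw HTML
        parser = LinkExtractor()
        parser.feed(html)
        for link in parser.links:
            if link not in seen:
                seen.add(link)
                queue.append(link)
    return index


pages = crawl(["http://example.test/"], FAKE_WEB.get)
print(sorted(pages))
```

The `max_pages` limit is one simple way to reflect the fact that a crawler covers only part of the Web; real crawlers use far more elaborate policies to decide what to visit.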

Chatbots

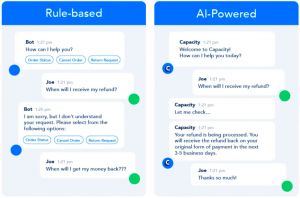

A chatbot is a software application that holds conversations with humans by imitating their dialogue.[22] Chatbots may converse with humans through text or voice. Though functionality can vary tremendously among them, chatbots can be classified into two primary subtypes: rule-based chatbots and AI chatbots. A rule-based chatbot is governed by a predefined set of rules (e.g., a table mapping keywords to output) that dictates how it should respond to human input. An AI chatbot, on the other hand, utilizes ML to understand a human language and provides responses to human input based on the understanding that it develops.
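A rule-based chatbot of the kind described above can be approximated with a table that maps keywords to canned responses. The Python sketch below is illustrative only: the `RULES` table, the `FALLBACK` message, and the `reply` function are hypothetical names chosen for the example, and a real rule-based chatbot would typically use many more rules and more careful matching.

```python
import re

# Hypothetical keyword-to-response table; a real bot would have far more rules.
RULES = {
    "hello": "Hi there! How can I help?",
    "price": "Our plans start with a free tier.",
    "bye": "Goodbye!",
}
FALLBACK = "Sorry, I did not understand that."


def reply(message: str) -> str:
    """Return the response for the first rule keyword found in the message."""
    words = set(re.findall(r"[a-z']+", message.lower()))
    for keyword, response in RULES.items():
        if keyword in words:
            return response
    return FALLBACK


print(reply("Hello, bot"))          # Hi there! How can I help?
print(reply("What is the price?"))  # Our plans start with a free tier.
print(reply("Tell me a joke"))      # Sorry, I did not understand that.
```

Because the responses come straight from the table, the bot cannot handle input outside its rules, which is the main limitation that AI chatbots try to address.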

Examples of bots

Googlebot

Googlebot is the web crawler used by Google to build its search engine.[20] More precisely, Googlebot refers to two distinct types of web crawlers used by Google, one of which imitates a desktop user (Googlebot Desktop) and the other of which imitates a mobile user (Googlebot Smartphone).[23] On average, Googlebot will not crawl a particular website more than once every few seconds. Googlebot was designed with performance and scalability in mind. As a result, thousands of Google’s machines run instances of Googlebot concurrently. Additionally, in order to reduce bandwidth consumption, numerous Googlebot instances are run on machines located close to the servers hosting websites that are likely to be crawled. Googlebot generally crawls websites over version 1.1 of the Hypertext Transfer Protocol (HTTP), although it may use HTTP/2 for sites that support it.

Wikipedia bots

A Wikipedia bot is a type of software bot that serves to maintain the more than 55 million pages on the English Wikipedia.[24] There are over 2,500 Wikipedia bots that have been approved for use. Previously, Wikipedia bots were used to quickly create a large number of articles; however, such mass creation is now restricted by Wikipedia’s bot policy. The bot policy was developed due to concern over technical disruptions that can occur on Wikipedia when bots are not properly designed. The function and scope of Wikipedia bots vary widely. Common tasks carried out by bots include editing, adding content, and archiving, all with respect to articles. Some specific Wikipedia bots include ClueBot NG (reverts vandalism), AnomieBOT (delivers alerts to WikiProjects), and InternetArchiveBot (mitigates link rot).

Intercom Custom Bots

Custom Bots are a chatbot solution developed by Intercom.[25] They are designed to help companies scale their sales, marketing, and customer support efforts. The chatbots are customizable and can thus be extended to add company-specific features. A Custom Bot is typically deployed directly on a company’s website in order to engage with visitors. It initiates conversations by asking targeted questions that help gain insight into a visitor’s needs or concerns. By this means, Custom Bots assist companies in efficiently converting website visitors into customers and resolving existing customer requests. Various enterprise services, including those offered by Salesforce, GitHub, and Stripe, can be integrated with a Custom Bot.[26]

Traffic and management

Traffic

Bots account for a significant portion of total Internet traffic.[28] Imperva’s annual Bad Bot Report found that bots accounted for 40.8% of all traffic in 2020, a 5.7% increase from the previous year. Malicious (bad) bots made up 25.6% of all traffic, while harmless (good) bots made up the remaining 15.2%. The majority of bad bots utilize moderate to sophisticated persistence mechanisms that make them hard to detect and combat. Moreover, bad bots account for traffic across several industries. The telecommunications, information technology, sports, news, and business industries have bad bots accounting for 45.7%, 41.4%, 33.7%, 33%, and 29.7% of their total traffic, respectively. The percentage of bad bots utilizing mobile user agents is on the rise, with 28.1% of all bad bot traffic originating from mobile browsers. In terms of global presence, 40.5% of bad bot traffic originates from the United States, followed by China (5.2%), the United Kingdom (4.9%), Russia (3.9%), and Japan (3.4%).

Management

CAPTCHA

A Completely Automated Public Turing test to tell Computers and Humans Apart (CAPTCHA) is a security check that aims to discern between bots and human users.[29] The objective of a CAPTCHA is to provide a task that is easy for humans to complete but difficult for an automated process, like a bot, to complete.[27] A website may utilize a CAPTCHA for a number of reasons, including limiting the creation of fake accounts, preserving poll accuracy, and reducing false comments. There are several types of CAPTCHAs, such as text-based (e.g., distorted alphanumeric sequence), image-based (e.g., select all images that match a theme), and checkbox (e.g., “I’m not a robot”). One popular CAPTCHA system is reCAPTCHA.

Robots.txt

The robots exclusion protocol is an Internet protocol used to limit the access that bots (primarily web crawlers) have to a website.[30] Web servers host a file named robots.txt (normally in the root directory of the server) that specifies which parts of a website should not be accessed by bots, along with other instructions for them to follow. The instructions contained in a robots.txt file cannot actually be enforced by a website. Rather, the intent is that good bots will follow them in order to avoid being a burden on a website. It is therefore unlikely that the presence of a robots.txt file will control the activity of malicious bots.
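To show how a well-behaved bot might honor these instructions, the following Python sketch uses the standard library's `urllib.robotparser` module to check URLs against a robots.txt file before fetching them. The file content, the `example.test` URLs, and the `ExampleBot` user agent are all hypothetical, and `Crawl-delay` is a non-standard directive that not every crawler honors. The file is parsed from a string so the example runs without network access; a real bot would download it from the target site.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content; a real bot would download it from the site.
ROBOTS_TXT = """\
User-agent: *
Disallow: /private/
Crawl-delay: 5
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())


def allowed(url: str, user_agent: str = "ExampleBot") -> bool:
    """Return True if robots.txt permits this user agent to fetch the URL."""
    return parser.can_fetch(user_agent, url)


print(allowed("https://example.test/index.html"))    # True
print(allowed("https://example.test/private/data"))  # False
print(parser.crawl_delay("ExampleBot"))              # requested delay in seconds
```

Nothing in this check is enforced by the server: a bot that never calls `allowed` can still request the disallowed pages, which is why robots.txt only influences bots that choose to cooperate.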

Ethical concerns

Distributed denial-of-service attacks

A distributed denial-of-service (DDoS) attack is a type of DoS attack in which a distributed collection of attackers, typically a botnet, generates a substantial amount of traffic on a service in hopes of overwhelming it.[31] As the name suggests, a successful DDoS attack can make a service unavailable to a significant portion of its userbase. A DDoS attack differs from a conventional, single-source DoS attack in that the former floods a target service from many sources at once, while the latter originates from a single one. A botnet is normally used to generate the many connections required. For example, the 2016 DDoS attack on Dyn, which disrupted services such as Airbnb, Netflix, and Amazon, was carried out through a botnet created by installing Mirai malware on a large number of devices.[32] DDoS attacks can occur at different layers of the Open Systems Interconnection (OSI) model, particularly the application layer (layer 7) and the network layer (layer 3).[33] DDoS attacks that occur at the application layer often focus on exhausting a service’s resources. Application layer DDoS attacks include HTTP floods and DNS query floods. DDoS attacks that occur at the network layer frequently aim to create congestion in a service’s network pipelines. Network layer DDoS attacks include ping floods and Smurf attacks.[34]

Spamming

Spamming is the use of an online communication channel to deliver unsolicited messages (spam) on a large scale.[35] Spam usually consists of advertisements, backlinks, or malware downloads.[36] A spambot is a type of bot designed to facilitate spamming.[37] Spambots operate on a variety of platforms, including social media, online forums, email services, and messaging apps.[36] In order to generate and deliver spam, a spambot needs to create an account on its target platform. This, however, is a fairly simple task to automate, and workarounds exist for common security defenses, such as CAPTCHAs. In the specific case of sending email spam, a spambot needs to harvest a large number of email addresses to which it can deliver spam. One way to obtain a lengthy email address list is by utilizing a web scraping bot, which can download web pages and then scan them for specific patterns (e.g., an “@” followed by a domain) that match email addresses. Another, perhaps simpler, method is to purchase email address lists on the dark web. Spambots that operate on messaging apps can typically hold rudimentary conversations with any users that respond to them and thus resemble very primitive chatbots.

Scalping

In the context of bots, scalping refers to the acquisition of a high-demand or limited-availability product in hopes of reselling it for a profit.[28] Bots are used to make initial transactions because timing margins are narrow and an automated process can carry out transactions much more quickly than a human.[38] Historically, scalping has focused on industries such as tickets (sporting events and concerts) and clothing (limited releases, such as exclusive sneaker drops).[28] During the COVID-19 pandemic, however, scalping shifted towards new markets, in large part because many live-audience events were canceled. Specifically, scalping bots were used to target items such as face coverings, workout equipment, hand sanitizers, and other products that came into high demand as public spaces closed and people were encouraged to control and prevent the spread of COVID-19. Scalping activity on retail websites peaks during the holiday season, when scalpers anticipate which gifts and products will be in high demand. As a result, scalping bots are referred to as “Grinchbots” during the holiday season. Grinchbots targeted the gaming industry heavily during 2020, as scalpers quickly depleted the supply of gaming hardware such as GPUs, CPUs, and next-generation video game consoles.

Misinformation spread

Misinformation spread is the dispersion of false or misleading information.[39] It includes the circulation of fake news, conspiracy theories, and other types of inaccurate information, regardless of whether or not the information is intentionally deceptive. Bots, particularly social media bots, play a significant role in spreading misinformation.[40] Social media platforms, such as Twitter and Facebook, are targeted destinations for bots aiming to disseminate information because they enable information to propagate quickly and reach a wide-ranging audience. Bots have had a notable impact in spreading misinformation during several far-reaching events, including the COVID-19 pandemic and the 2016 United States presidential election.[39][41]

A bot can determine the most relevant content to spread by taking into consideration trending topics on social media.[40] Such topics can easily be discovered by bots, as most social media platforms make them accessible to their users. Once a bot is aware of a popular or widely discussed matter, it can search the Web and retrieve content related to it. Following this, a bot can post the content, or portions of it, to social media. Misinformation can spread through this process because the content retrieved by a bot may not be curated or verified.

Credential stuffing

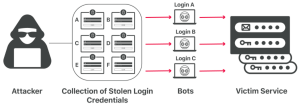

Credential stuffing is a type of cyberattack wherein compromised login credentials from one service are used in an attempt to gain unauthorized access to accounts on another service.[42] The underlying assumption of such an attack is that people frequently reuse username and password pairs (or other forms of account credentials) across different services. This allows an attacker to potentially acquire sensitive information, such as credit card numbers, from various sources. Stolen collections of username and password pairs usually stem from data breaches and can be obtained from an online black market. Bots factor into a credential stuffing attack during the process of breaching a service, or multiple services, with a list of compromised account credentials.[43] They assist in scaling and automating an attack, which is necessary due to the considerable number of credentials often involved. More specifically, a bot executing a credential stuffing attack leverages parallel computing to attempt to log in to several accounts on a service simultaneously. It tracks successful logins as it executes and, to get around security defenses, may spoof its IP address on each login attempt to make it appear that each attempt is from a different device. This process may then be repeated on other services.

References

- ↑ Suchacka, G., & Iwański, J. (2020). Identifying legitimate web users and bots with different traffic profiles — An information bottleneck approach. Knowledge-Based Systems, 197, 3. https://doi.org/10.1016/j.knosys.2020.105875

- ↑ 2.0 2.1 2.2 Cloudflare. (n.d.). How is an internet bot constructed? Cloudflare. Retrieved January 20, 2022, from https://www.cloudflare.com/learning/bots/how-is-an-internet-bot-constructed/

- ↑ 3.0 3.1 Kaspersky. (2021, March 22). What are bots? – Definition and explanation. Kaspersky. Retrieved January 20, 2022, from https://www.kaspersky.com/resource-center/definitions/what-are-bots

- ↑ JavaTpoint. (n.d.). Turing test in AI. JavaTpoint. Retrieved January 28, 2022, from https://www.javatpoint.com/turing-test-in-ai

- ↑ 5.0 5.1 Zantal-Wiener, A. (2021, June 11). Where do bots come from? A brief history. HubSpot. Retrieved January 21, 2022, from https://blog.hubspot.com/marketing/where-do-bots-come-from

- ↑ Oppy, G., & Dowe, D. (2003, April 9). The Turing Test. In The Stanford Encyclopedia of Philosophy. Metaphysics Research Lab, Stanford University. Retrieved January 21, 2022, from https://plato.stanford.edu/entries/turing-test/

- ↑ Adamopoulou, E., & Moussiades, L. (2020). Chatbots: History, technology, and applications. Machine Learning with Applications, 2, 1–3. https://doi.org/10.1016/j.mlwa.2020.100006

- ↑ Wood, D. (2020, August 28). What is the history of chatbots? YakBots. Retrieved January 21, 2022, from https://yakbots.com/what-is-the-history-of-chatbots/

- ↑ Gillis, A. S. (n.d.). Bot. In WhatIs.com dictionary. Retrieved January 21, 2022, from https://whatis.techtarget.com/definition/bot-robot

- ↑ 10.0 10.1 Knecht, T. (2021, May 4). A brief history of bots and how they've shaped the Internet today. Abusix. Retrieved January 21, 2022, from https://abusix.com/resources/botnets/a-brief-history-of-bots-and-how-theyve-shaped-the-internet-today/

- ↑ The History of SEO. (n.d.). Short history of early search engines. The History of SEO. Retrieved January 21, 2022, from https://www.thehistoryofseo.com/The-Industry/Short_History_of_Early_Search_Engines.aspx

- ↑ Joshi, N. (2020, February 23). Choosing between rule-based bots and AI Bots. Forbes. Retrieved January 22, 2022, from https://www.forbes.com/sites/cognitiveworld/2020/02/23/choosing-between-rule-based-bots-and-ai-bots/

- ↑ Nanda, D., Wadhwa, P., Singh, S., & Kumar, D. (2014). Botnet: Lifecycle, architecture and detection model. International Journal of Latest Technology in Engineering, Management & Applied Science, 3(3), 28–29. https://doi.org/10.51583/ijltemas

- ↑ Techopedia. (2017, January 4). Botnet. In Techopedia dictionary. Retrieved January 22, 2022, from https://www.techopedia.com/definition/384/botnet

- ↑ Norton. (2019, August 12). What is a botnet? Norton. Retrieved January 22, 2022, from https://us.norton.com/internetsecurity-malware-what-is-a-botnet.html

- ↑ 17.0 17.1 Fortinet. (n.d.). What is a botnet? Fortinet. Retrieved January 22, 2022, from https://www.fortinet.com/resources/cyberglossary/what-is-botnet

- ↑ Imperva. (2020, September 24). Bots. Imperva. Retrieved January 23, 2022, from https://www.imperva.com/learn/application-security/what-are-bots/

- ↑ Newberg, M. (2017, March 10). As many as 48 million Twitter accounts aren't people, says study. CNBC. Retrieved January 23, 2022, from https://www.cnbc.com/2017/03/10/nearly-48-million-twitter-accounts-could-be-bots-says-study.html

- ↑ 20.0 20.1 Cloudflare. (n.d.). What is a web crawler? | How web spiders work. Cloudflare. Retrieved January 23, 2022, from https://www.cloudflare.com/learning/bots/what-is-a-web-crawler/

- ↑ Sabin, J. (2020, January 7). Intro to chatbots. Capacity. Retrieved February 9, 2022, from https://capacity.com/chatbots/intro-to-chatbots/

- ↑ Cloudflare. (n.d.). What is a chatbot? Cloudflare. Retrieved February 9, 2022, from https://www.cloudflare.com/learning/bots/what-is-a-chatbot/

- ↑ Google. (2021, December 4). Googlebot. Google Developers. Retrieved January 24, 2022, from https://developers.google.com/search/docs/advanced/crawling/googlebot

- ↑ Wikipedia:Bots. (2022, January 4). In Wikipedia. https://en.wikipedia.org/wiki/Wikipedia:Bots

- ↑ Intercom. (n.d.). Custom bots. Intercom. Retrieved February 10, 2022, from https://www.intercom.com/customizable-bots

- ↑ Intercom. (n.d.). Custom bots. Intercom. Retrieved February 10, 2022, from https://www.intercom.com/drlp/customizable-bots

- ↑ 27.0 27.1 Imperva. (n.d.). CAPTCHA. Imperva. Retrieved January 25, 2022, from https://www.imperva.com/learn/application-security/what-is-captcha/

- ↑ 28.0 28.1 28.2 Imperva. (2021). Bad bot report 2021: The pandemic of the Internet. https://www.imperva.com/resources/resource-library/reports/bad-bot-report/

- ↑ Cloudflare. (n.d.). How CAPTCHAs work | What does CAPTCHA mean? Cloudflare. Retrieved January 25, 2022, from https://www.cloudflare.com/learning/bots/how-captchas-work/

- ↑ Cloudflare. (n.d.). What is robots.txt? | How a robots.txt file works. Cloudflare. Retrieved January 25, 2022, from https://www.cloudflare.com/learning/bots/what-is-robots.txt/

- ↑ Imperva. (n.d.). Distributed denial of service (DDoS). Imperva. Retrieved January 26, 2022, from https://www.imperva.com/learn/ddos/denial-of-service/

- ↑ Cloudflare. (n.d.). Famous DDoS attacks | The largest DDoS attacks of all time. Cloudflare. Retrieved January 26, 2022, from https://www.cloudflare.com/learning/ddos/famous-ddos-attacks/

- ↑ Cloudflare. (n.d.). What is a DDoS attack? Cloudflare. Retrieved January 26, 2022, from https://www.cloudflare.com/learning/ddos/what-is-a-ddos-attack/

- ↑ Cloudflare. (n.d.). How do layer 3 DDoS attacks work? | L3 DDoS. Cloudflare. Retrieved January 26, 2022, from https://www.cloudflare.com/learning/ddos/layer-3-ddos-attacks/

- ↑ Techopedia. (n.d.). Spamming. In Techopedia dictionary. Retrieved January 26, 2022, from https://www.techopedia.com/definition/23763/spamming

- ↑ 36.0 36.1 Cloudflare. (n.d.). What is a spam bot? | How spam comments and spam messages spread. Cloudflare. Retrieved January 26, 2022, from https://www.cloudflare.com/learning/bots/what-is-a-spambot/

- ↑ Okta. (n.d.). What is a spam bot? Definition & defenses. Okta. Retrieved January 26, 2022, from https://www.okta.com/identity-101/spam-bot/

- ↑ Netacea. (2021, October 7). What are scalper bots? Netacea. Retrieved January 26, 2022, from https://www.netacea.com/glossary/scalper-bots/

- ↑ 39.0 39.1 Himelein-Wachowiak, M., Giorgi, S., Devoto, A., Rahman, M., Ungar, L., Schwartz, H. A., Epstein, D. H., Leggio, L., & Curtis, B. (2021). Bots and misinformation spread on social media: Implications for COVID-19. Journal of Medical Internet Research, 23(5), 5–9. https://doi.org/10.2196/26933

- ↑ 40.0 40.1 University of California, Santa Barbara. (n.d.). How is fake news spread? Bots, people like you, trolls, and microtargeting. Center for Information Technology and Society. Retrieved February 7, 2022, from https://www.cits.ucsb.edu/fake-news/spread

- ↑ Shao, C., Ciampaglia, G. L., Varol, O., Yang, K.-C., Flammini, A., & Menczer, F. (2018). The spread of low-credibility content by social bots. Nature Communications, 9(1), 2. https://doi.org/10.1038/s41467-018-06930-7

- ↑ 42.0 42.1 Cloudflare. (n.d.). What is credential stuffing? | Credential stuffing vs. brute force attacks. Cloudflare. Retrieved February 8, 2022, from https://www.cloudflare.com/learning/bots/what-is-credential-stuffing/

- ↑ Imperva. (n.d.). Credential stuffing. Imperva. Retrieved February 8, 2022, from https://www.imperva.com/learn/application-security/credential-stuffing/